Agentic AI for Data Workflows: The Complete Guide to Automating Pipelines and Decisions

What Is Agentic AI for Data Workflows and Why It Matters Now

Agentic AI for data workflows transforms how enterprises collect, transform, and act on data, replacing brittle, engineering-heavy pipelines with autonomous systems that perceive, plan, and execute in real time. Understanding how agentic workflows work is the first step toward replacing legacy process automation with workflows that adapt as conditions change. This guide explains what agentic AI data workflows are, how they work, how they compare to traditional automation, and how your organization can implement them.

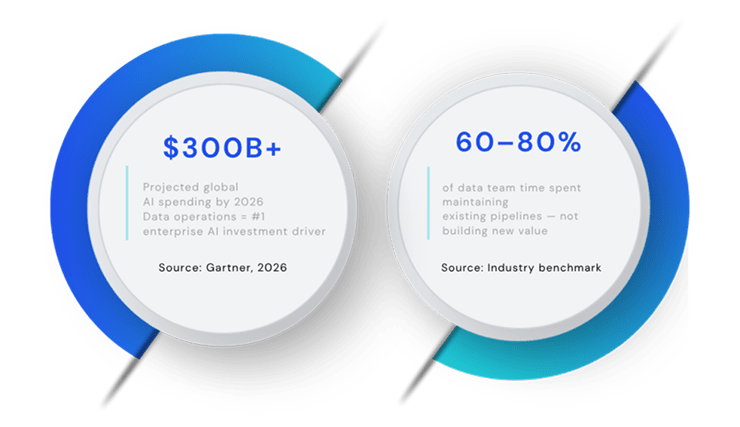

Global spending on artificial intelligence solutions, including agentic workflows and workflow automation, is projected to exceed $300 billion by 2026, with data operations cited as the single largest driver of enterprise AI investment. Organizations that fail to modernize their data pipelines risk falling behind competitors who have already automated their core operations. Yet most organizations still manage their data pipelines through disconnected tools, manual orchestration, and engineering backlogs that slow decision-making by weeks.

The problem is structural. Data volume has outpaced the human capacity to manage it. An insurer processing millions of claims events, an energy company monitoring thousands of grid sensors, and a financial services firm running hundreds of overnight batch jobs all face the same constraint: pipelines built for a slower, simpler world.

Agentic AI workflows offer a fundamentally different model: one where platforms like Arivonix connect, unify, and automate data operations in less than a day, with no upfront infrastructure cost and no deep engineering dependency. This guide is for the CDO benchmarking architectural options, the VP of Data evaluating build vs. buy, and the data engineer who needs to know what’s actually under the hood. Real-world use cases and insights from enterprise deployments are woven throughout to illustrate practical application.

What Are Data Workflows and Why Traditional Automation Falls Short in Modern Data Environments

A data workflow is the end-to-end process by which raw data is ingested, transformed, validated, orchestrated, and delivered as actionable output, whether that output is a dashboard, a machine learning prediction, an automated alert, or a triggered business action.

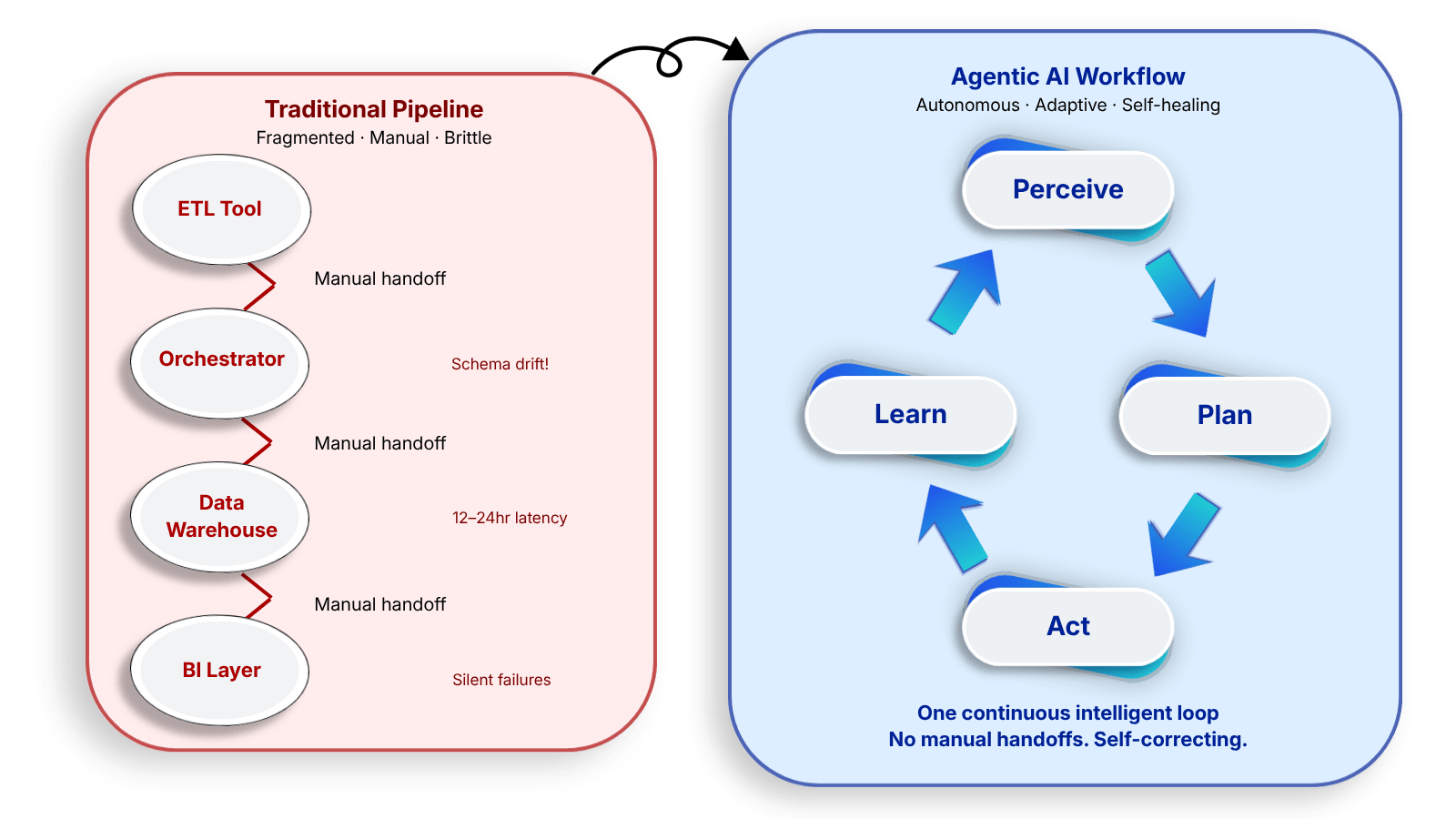

In modern enterprises, this lifecycle spans multiple disconnected systems: ETL tools pulling from source databases, orchestration platforms scheduling batch jobs, data warehouses storing transformed records, BI tools serving queries, and ML pipelines generating predictions. Each layer introduces latency, manual handoffs, and failure points.

The pain points are well documented. Schema drift breaks downstream pipelines without warning. Pipeline failures require manual triage, often hours after the data was needed. Adding a new data source can take weeks of engineering time. Batch processing creates a 12-to-24-hour window in which decisions are made on stale data. Data quality issues propagate silently until they surface in production reports.

The result: data teams spend 60–80% of their time maintaining existing pipelines rather than building new value. Gartner estimates that poor data quality costs organizations an average of $12.9 million per year, a figure that understates the strategic cost of delayed decisions.

What Is Agentic AI?

Agentic AI refers to AI systems that can independently reason about goals, plan multi-step actions, execute tasks across external systems, and adapt their behaviour based on real-time feedback, without requiring a human to direct each step. Unlike generative AI, which responds to prompts, agentic AI operates autonomously toward a defined objective.

In a data context, agentic automation means that a system given the goal ‘ensure data quality in the customer 360 pipeline’ will, without further instruction, monitor incoming records, detect schema anomalies, trigger remediation workflows, escalate edge cases to a human reviewer, and log all actions for audit. The system perceives, plans, acts, and learns in a continuous loop.

How Agentic Workflows Work: Tools, Stages, and the Four-Stage Loop

Agentic AI data workflows operate through a continuous four-stage loop that replaces static pipeline execution with dynamic, goal-driven orchestration. Understanding this loop is essential to evaluating any agentic platform.

Perceive: Continuous Data Observation

The agent monitors connected data sources in real time (databases, APIs, event streams, file systems), detecting changes, anomalies, and trigger conditions without polling on a fixed schedule. It maintains context across sessions using short-term memory (working state) and long-term memory (vector store or knowledge graph).

Plan: Goal Decomposition and Task Sequencing

Given a high-level goal (for example, ‘refresh customer data product by 6 AM’), the agent decomposes it into a sequence of sub-tasks, selects the appropriate tools and transformation logic, and re-plans dynamically when upstream conditions change. In complex workflows, multi-agent orchestration assigns specialized sub-agents to parallel tasks: one handling ingestion, another running quality checks, another generating the output data product. This collaboration between agents enables parallelism that a single agent working alone cannot achieve.

Act: Execution Across Systems

The agent executes transformations, triggers downstream systems, and writes to data warehouses or lakehouses, invoking pre-built connectors across cloud and legacy systems without custom engineering. Organizations migrating from legacy Hadoop environments or managing vast data lakes across distributed infrastructure benefit most from this layer, as pre-built tools and integrations eliminate the bespoke connector work that previously consumed weeks of engineering time. Every action is logged with full lineage for audit and governance. Automated compliance checks are embedded directly into agentic workflows, so that every transformation and routing decision is checked against regulatory requirements without additional manual oversight.

Learn: Feedback and Continuous Improvement

The agent evaluates outcomes against the original goal and updates its planning heuristics. It flags low-confidence decisions for human-in-the-loop review before acting, and improves pipeline efficiency over time through reinforcement from operator feedback.
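The four stages above can be sketched as a single pass through a toy agent. This is a minimal illustration, not any platform's implementation: the `LoopAgent` class, the `email` field, the `max_null_rate` goal, and the task names are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class LoopAgent:
    """Toy agent illustrating one pass through perceive, plan, act, learn."""
    max_null_rate: float = 0.05  # hypothetical quality goal
    audit_log: list = field(default_factory=list)
    outcomes: list = field(default_factory=list)

    def perceive(self, batch):
        # Observe the incoming records and summarise their state.
        nulls = sum(1 for row in batch if row.get("email") is None)
        rate = nulls / len(batch) if batch else 0.0
        return {"rows": len(batch), "null_rate": rate}

    def plan(self, observation):
        # Decompose the goal into ordered sub-tasks based on the observation.
        tasks = ["ingest"]
        if observation["null_rate"] > self.max_null_rate:
            tasks.append("quarantine_nulls")
        tasks.append("publish")
        return tasks

    def act(self, tasks, batch):
        # Execute each task and log it for lineage and audit.
        for task in tasks:
            if task == "quarantine_nulls":
                batch = [row for row in batch if row.get("email") is not None]
            self.audit_log.append(task)
        return batch

    def learn(self, observation, result):
        # Record the outcome so later planning can draw on it.
        self.outcomes.append(
            {"null_rate_in": observation["null_rate"], "rows_out": len(result)}
        )

    def run_once(self, batch):
        observation = self.perceive(batch)
        tasks = self.plan(observation)
        result = self.act(tasks, batch)
        self.learn(observation, result)
        return result


agent = LoopAgent()
clean = agent.run_once([{"email": "a@example.com"}, {"email": None}])
```

In a real deployment the perceive step would read from live sources and the learn step would feed back into planning; here both are reduced to in-memory lists so the control flow stays visible.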

Architecture of Agentic AI Data Workflows: From Single Agent to Enterprise Framework

A production-grade agentic data workflow platform consists of six integrated layers. The absence of any single layer, or the presence of seams between them, is where fragmentation and operational risk accumulate.

A data fabric creates a unified, real-time semantic view of all data across an organization regardless of where that data lives: cloud, on-prem, data warehouse, operational database, or streaming platform. Unlike a data mesh, which distributes ownership to domain teams, a data fabric provides centralized integration logic with federated data access.

For agentic AI to function effectively, it needs a coherent data fabric underneath it. Without one, agents operate on fragmented, inconsistent data and produce unreliable decisions. The data fabric is the connective tissue that makes agentic intelligence possible at enterprise scale, and it is what separates reliable agentic workflows from brittle point solutions.

Agentic AI Use Cases in Data Workflows for Enterprises

The following use cases represent the highest-value agentic AI applications in enterprise data operations, ranked by frequency of deployment and measurable operational impact. These agentic workflows are especially effective at automating complex processes that previously required significant manual effort.

1. Automated Data Quality Monitoring and Remediation

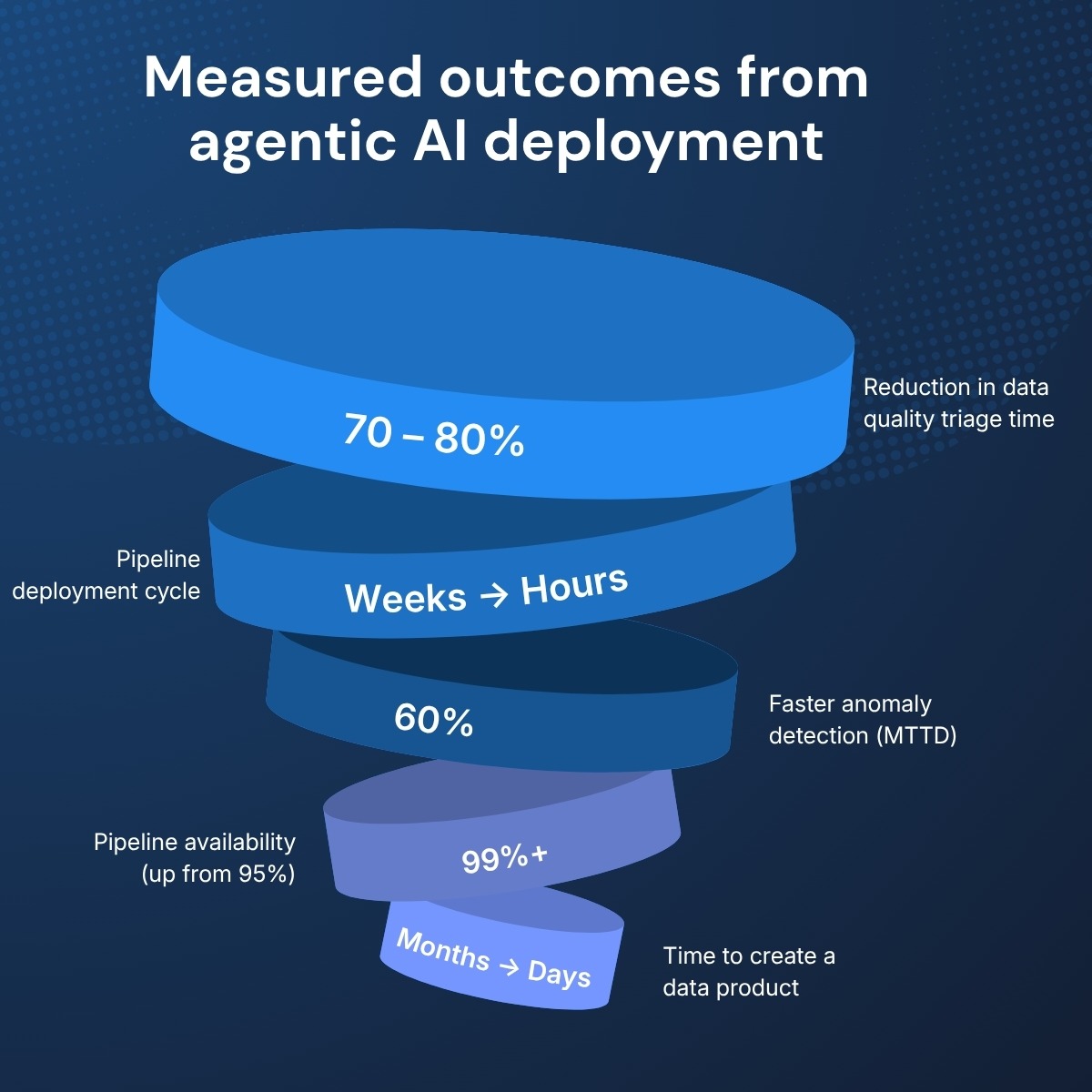

An agentic system continuously monitors incoming data for schema violations, null rates, statistical outliers, and referential integrity failures. When a violation is detected, the agent classifies severity, triggers an automated remediation workflow, and escalates to a human reviewer only when confidence falls below a set threshold. Outcome: 70–80% reduction in manual data quality triage time across financial services, insurance, and healthcare environments.
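As a rough sketch of the detect, classify, and escalate flow described above, the following covers only the null-rate check. The function names, the `policy_id` column, and every threshold value are assumptions chosen for illustration.

```python
def null_rate(records, column):
    """Fraction of records where `column` is missing or None."""
    if not records:
        return 0.0
    missing = sum(1 for r in records if r.get(column) is None)
    return missing / len(records)


def classify_severity(rate, warn_at=0.02, critical_at=0.10):
    # Thresholds are hypothetical; tune them against your own baseline.
    if rate >= critical_at:
        return "critical"
    if rate >= warn_at:
        return "warning"
    return "ok"


def decide(severity, confidence, min_confidence=0.8):
    """Return the next step: nothing, automated remediation, or human review."""
    if severity == "ok":
        return "none"
    if confidence < min_confidence:
        return "escalate_to_human"
    return "auto_remediate"


records = [{"policy_id": 1}, {"policy_id": None}, {"policy_id": 3}, {"policy_id": 4}]
rate = null_rate(records, "policy_id")
severity = classify_severity(rate)
next_step = decide(severity, confidence=0.9)
```

The `confidence` value would come from whatever model classifies the violation; it is passed in directly here to keep the sketch self-contained.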

2. Intelligent ETL Pipeline Orchestration

Traditional ETL pipelines execute on fixed schedules regardless of upstream conditions. Agentic orchestration uses active metadata (real-time signals about data availability, freshness, and quality) to trigger pipeline execution dynamically. Agents adapt transformation logic when source schemas change, without manual pipeline rewrites. Outcome: pipeline deployment cycles reduced from weeks to hours in manufacturing, logistics, and digital platforms.
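One way to picture metadata-driven triggering is a predicate over the three signals named above, evaluated whenever the metadata changes rather than on a clock. The metadata keys and the `min_quality` default below are hypothetical.

```python
from datetime import datetime, timezone


def should_trigger(meta, min_quality=0.95):
    """Run the pipeline only when the source is up, has new data, and passes quality."""
    if not meta["source_available"]:
        return False
    has_fresh_data = meta["source_updated_at"] > meta["last_run_at"]
    return has_fresh_data and meta["quality_score"] >= min_quality


meta = {
    "source_available": True,
    "source_updated_at": datetime(2025, 1, 1, 6, 30, tzinfo=timezone.utc),
    "last_run_at": datetime(2025, 1, 1, 6, 0, tzinfo=timezone.utc),
    "quality_score": 0.98,
}
run_now = should_trigger(meta)
```

A fixed-schedule pipeline would run at its cron time whether or not these conditions held; the predicate replaces the clock as the trigger.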

3. Real-Time Anomaly Detection and Alerting

Agents apply LLM-powered reasoning to distinguish genuine anomalies from statistical noise, a capability that rule-based monitoring systems cannot replicate. When an anomaly is confirmed, the agent generates a natural-language summary, routes the alert to the correct team, and logs the incident for root-cause analysis. Outcome: mean time to detect (MTTD) reduced by up to 60% in energy, cybersecurity, and e-commerce.

4. Automated Failure Detection and Pipeline Recovery

When a pipeline stage fails (due to source unavailability, a schema change, or a capacity constraint), an agentic system diagnoses the failure, selects a recovery strategy, executes the fix, and resumes execution. Human intervention is required only for novel failure types outside the agent’s existing resolution toolkit. Outcome: pipeline availability SLAs improved from 95% to 99%+ across all enterprise verticals.
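The diagnose, select, and resume pattern can be sketched as a playbook lookup with a human fallback for unknown failure types. The `StageError` class, the playbook entries, and the strategy names are hypothetical, and the sketch only records the chosen strategy rather than executing a real fix.

```python
RECOVERY_PLAYBOOK = {
    "source_unavailable": "retry_with_backoff",
    "schema_change": "remap_columns",
    "capacity_constraint": "scale_out_and_retry",
}


class StageError(Exception):
    """Failure raised by a pipeline stage, tagged with a diagnosed type."""

    def __init__(self, failure_type):
        super().__init__(failure_type)
        self.failure_type = failure_type


def run_with_recovery(stage, max_attempts=3):
    """Run `stage`; after each known failure, note the strategy and retry."""
    actions = []
    for _ in range(max_attempts):
        try:
            return stage(), actions
        except StageError as err:
            strategy = RECOVERY_PLAYBOOK.get(err.failure_type)
            if strategy is None:
                # Novel failure type: outside the toolkit, hand over to a human.
                actions.append("escalate_to_human")
                return None, actions
            # A real system would apply the strategy here before retrying.
            actions.append(strategy)
    actions.append("escalate_to_human")
    return None, actions


calls = {"n": 0}


def flaky_stage():
    # Fails once with a known failure type, then succeeds.
    calls["n"] += 1
    if calls["n"] == 1:
        raise StageError("schema_change")
    return "loaded"


result, actions = run_with_recovery(flaky_stage)
```

Bounding the retries with `max_attempts` keeps a persistently failing stage from looping forever; once the budget is spent, the sketch escalates just as it does for an unknown failure.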

5. Data Product Creation and Monetization

Agentic systems autonomously assemble curated data products from multiple sources, applying transformation logic, access policies, and freshness guarantees, and publish them to a data marketplace or internal catalog. This enables organizations to monetize data assets without building custom data engineering pipelines for every consumer. Outcome: time-to-data-product reduced from months to days.

6. Conversational Data Access via Natural Language Processing

AI agents for enterprise data environments enable non-technical users (business analysts, product managers, domain SMEs) to query complex data stores using natural language. Layered on top of the data fabric, an LLM-powered agent translates intent into SQL or API calls, retrieves the data, and returns a formatted, explainable result without data engineering involvement.

Why No-Code Agentic AI Platforms Are Changing Who Builds Data Workflows

The traditional data workflow stack requires a coordinated team: a data engineer to build the pipeline, an ML engineer to deploy the model, a DevOps engineer to manage infrastructure, and a data analyst to interpret output. For mid-market organizations, or enterprise teams with constrained headcount, this dependency creates a permanent bottleneck between data availability and business decision-making.

No-code agentic AI platforms eliminate this bottleneck by surfacing pipeline design, agent configuration, and data product publishing through visual interfaces that business analysts, domain experts, and product managers can operate directly without writing a line of code. Organizations with experience deploying these agentic workflows consistently report faster time-to-value than with traditional engineering-heavy approaches.

Arivonix Deployment Benchmark

Customers connect their first data source and deploy their first agentic workflow in less than one day, with no upfront infrastructure cost and no engineering prerequisite. For mid-market organizations evaluating time-to-value, this compresses a typical 3-to-6-month implementation timeline into a single working day.

Benefits of AI Workflows for Data Teams and the Business

For data engineering teams, the most immediate benefit is operational: a visual workflow builder reduces pipeline development from weeks to hours, self-healing pipelines and automated quality checks cut maintenance burden by 60–80%, and new data sources can be onboarded without proportional headcount growth. Teams can reallocate resources previously tied up in manual pipeline maintenance toward higher-value analytical work. McKinsey estimates that data engineering teams spend 40% of their time on data preparation and pipeline maintenance; agentic orchestration recovers most of this capacity.

For business and analytics teams, agentic AI delivers real-time intelligence (decisions based on current data rather than yesterday’s batch run) alongside self-service data access that removes engineering dependency from the analytical workflow. Agentic incident detection compresses root-cause analysis from hours to minutes. The insights generated through this process also feed directly into longer-term optimization strategies, helping data teams continuously improve pipeline performance and reliability.

At the organizational level, built-in lineage, access control, and audit trails support GDPR, CCPA, and sector-specific compliance without bolt-on governance tooling. A pay-as-you-scale model eliminates upfront infrastructure investment. BCG research indicates that enterprises deploying agentic AI in data operations achieve 30–50% acceleration in time-to-decision for data-intensive business processes.

Agentic AI Governance: Auditing Agent Decisions and Managing Risk in Automated Data Pipelines

Autonomous data systems introduce new categories of operational and compliance risk that traditional pipeline governance frameworks are not designed to address. Organizations deploying agentic AI in production data environments should address five risk domains before go-live. Many of the tasks involved in risk assessment and mitigation can themselves be automated through well-configured agentic workflows.

Auditability of autonomous decisions is the foundational requirement. Every action taken by an agent (every transformation applied, every record modified, every system triggered) must be logged with full context: what the agent perceived, what it planned, what it did, and what the outcome was. Without end-to-end decision lineage, autonomous agents create compliance exposure in any regulated environment. Arivonix provides built-in agent decision logging across all workflow executions.

Data privacy and access control become more complex when agentic systems can read and transform data across multiple systems. Field-level access controls and dynamic permission scoping at the data fabric layer are essential. GDPR Article 25 (data protection by design) and CCPA data minimisation requirements apply directly to agentic systems processing personal data.

Model drift and hallucination risk are inherent in LLM-powered agents. A hallucinated SQL query or incorrectly classified anomaly can corrupt downstream data products. Mitigation requires confidence thresholds below which agents escalate to human-in-the-loop review, combined with regular agent output audits and drift detection on decision quality metrics.

Human-in-the-loop checkpoints are a governance mechanism, not an afterthought. High-stakes actions (deleting records, triggering financial transactions, modifying master data) should require human approval regardless of agent confidence. Configurable checkpoints allow organizations to define the precise boundary between autonomous execution and supervised action.

Sector-specific regulatory requirements add a further layer. Financial services (MiFID II, SOX) require full audit trails for data feeding regulatory reporting. Healthcare (HIPAA) applies data minimisation and de-identification requirements to agent-accessed PHI. The EU AI Act subjects high-risk AI systems, including those used in credit decisions or fraud detection, to conformity assessments and mandated human oversight.

When Should Your Organization Consider Agentic AI Data Workflows?

Agentic AI is not a fit for every data environment. Organizations that implement it before establishing foundational data infrastructure will compound existing problems rather than solve them. The following indicators signal genuine readiness.

Act now if pipeline deployment cycles regularly take 4–8 weeks or longer due to engineering capacity constraints; if your data team spends more than 50% of its time on pipeline maintenance rather than new development; if business teams are making decisions on data that is 12+ hours old because batch processing is the only available model; if you have 10+ disconnected data sources that no current platform unifies in a queryable semantic layer; or if data quality incidents are discovered in production rather than caught in the pipeline.

Plan ahead if your organization is beginning to invest in AI models or ML capabilities but lacks the data infrastructure to feed them reliably; if domain teams are standing up shadow data systems because they cannot get timely access from the central data team; or if you are preparing for a regulatory environment requiring full data lineage and audit trails, such as the EU AI Act, DORA, or sector-specific rules.

How to Implement Agentic AI in Your Data Stack: A 5-Stage Roadmap

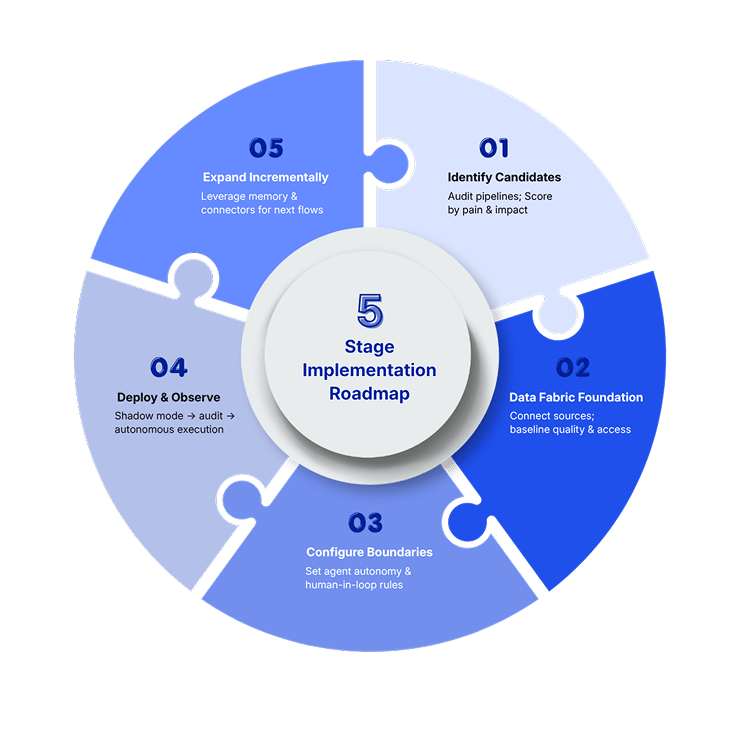

The organizations that successfully deploy agentic AI share a common pattern: they start with a contained, high-value use case, prove the model, and expand. The following five-stage roadmap reflects this pattern. From automating simple tasks to orchestrating complex AI workflows, the progression of agentic workflows within an enterprise follows a consistent and repeatable path.

Stage 1: Identify High-Value Automation Candidates

Audit your current pipeline portfolio and score each pipeline against two dimensions: operational pain (how much engineering time does it consume?) and business impact (how directly does it feed decisions the business depends on?). Start with the pipeline at the intersection of highest pain and highest impact.
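A simple way to make this scoring concrete is to rate each pipeline on both dimensions and rank by the product. The pipeline names, the 1-to-5 scale, and the choice of multiplication as the combining rule are all assumptions for illustration.

```python
# Hypothetical portfolio: pain and impact each scored from 1 (low) to 5 (high).
pipelines = [
    {"name": "claims_ingest", "pain": 4, "impact": 5},
    {"name": "marketing_export", "pain": 5, "impact": 2},
    {"name": "finance_close", "pain": 3, "impact": 4},
]


def rank_candidates(portfolio):
    """Order pipelines so those high on both pain and impact come first."""
    return sorted(portfolio, key=lambda p: p["pain"] * p["impact"], reverse=True)


ranked = rank_candidates(pipelines)
first_candidate = ranked[0]["name"]
```

Multiplying rather than adding the two scores favours pipelines that are high on both dimensions over those that are extreme on only one, which matches the "intersection" framing above.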

Stage 2: Establish the Data Fabric Foundation

Before deploying agents, ensure your data fabric layer provides a consistent semantic view of the data the agent will operate on. Agents operating on inconsistent, poorly governed data will produce unreliable outputs. Data governance standards increasingly require organizations to document how data flows through automated systems, making this foundation stage non-negotiable. This stage breaks down into four tasks: source connection, schema mapping, data quality baseline, and access control configuration. With Arivonix, this takes less than one day for most mid-market environments.

Stage 3: Configure Agent Boundaries and Human-in-the-Loop Rules

Define what the agent is permitted to do autonomously versus what requires human approval. Set confidence thresholds for each action class (transform, route, alert, escalate). Define escalation paths for edge cases. This configuration, not the agent itself, is the primary governance mechanism.
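Such a boundary configuration might be expressed as a small policy table plus one gate function. The threshold values and identifiers below are hypothetical; the always-supervised set mirrors the high-stakes actions named in the governance section.

```python
# Actions that need human approval regardless of agent confidence.
ALWAYS_REQUIRE_APPROVAL = {
    "delete_records",
    "financial_transaction",
    "modify_master_data",
}

# Hypothetical minimum confidence for autonomous execution, per action class.
MIN_CONFIDENCE = {
    "transform": 0.90,
    "route": 0.80,
    "alert": 0.60,
    "escalate": 0.0,
}


def requires_human(action_class, confidence):
    """Return True when the action must wait for human approval."""
    if action_class in ALWAYS_REQUIRE_APPROVAL:
        return True
    threshold = MIN_CONFIDENCE.get(action_class)
    if threshold is None:
        # Unknown action classes default to supervised execution.
        return True
    return confidence < threshold


needs_review = requires_human("transform", confidence=0.85)
```

Defaulting unknown action classes to supervised execution is a deliberately conservative choice: a new capability stays gated until someone adds it to the table.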

Stage 4: Deploy, Observe, and Tune

Run the agent in shadow mode for two to four weeks, executing its planned actions but not committing them, so you can audit decision quality before going live. Once quality benchmarks are met, switch to autonomous execution with full logging enabled.
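Shadow mode can be pictured as a wrapper that records every planned action but only forwards it to the real system once a flag is flipped. The `ShadowRunner` class and the example action are hypothetical, and a plain list stands in for the target system.

```python
class ShadowRunner:
    """Records planned actions; commits them only when shadow mode is off."""

    def __init__(self, commit, shadow=True):
        self.commit = commit    # callable that applies an action for real
        self.shadow = shadow
        self.planned = []       # every planned action, kept for audit

    def execute(self, action):
        self.planned.append(action)
        if not self.shadow:
            self.commit(action)


committed = []  # stand-in for the real target system
runner = ShadowRunner(commit=committed.append)

# During the shadow period the action is logged but nothing is written.
runner.execute({"op": "drop_duplicates", "table": "customers"})
shadow_commits = len(committed)

# After review, switch to autonomous execution with logging still on.
runner.shadow = False
runner.execute({"op": "drop_duplicates", "table": "customers"})
live_commits = len(committed)
```

The `planned` list is what reviewers would audit during the shadow period, comparing what the agent intended against what an operator would have done.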

Stage 5: Expand the Scope Incrementally

Once the first agentic workflow is stable, use the data and governance foundation you have built to onboard additional pipelines. Each subsequent workflow benefits from the accumulated agent memory, connector library, and quality baselines established in prior stages. This continuous learning capability is one of the defining advantages of agentic workflows over static pipeline automation.

Why Arivonix Leads Among Agentic AI Data Platforms for Workflows

Arivonix is an agentic AI and data fabric platform purpose-built for enterprise data management and monetization. Unlike point solutions that address one layer of the data stack, Arivonix unifies data ingestion, data fabric, multi-agent AI, and data product delivery in a single no-code platform, enabling organizations to go from raw data to automated, AI-driven decisions without stitching together multiple vendors.

The Future of Agentic AI in Data Workflows

Three structural shifts are defining the next phase of agentic AI in enterprise data operations. For a deeper look at each of these trends, the Arivonix blog publishes ongoing insights and analysis on the evolution of agentic workflows in enterprise data environments.

The DataOps movement promised to apply DevOps principles to data pipeline management. Agentic AI is completing that transition, moving from ‘automated pipelines’ to ‘autonomous operations’ where agents not only execute workflows but monitor, optimize, and govern them without continuous human oversight. This is the emerging discipline of AIOps for data.

The next generation of data platforms will be built with LLM-powered reasoning at their core, not added as a layer on top of a traditional stack. This means the architecture described in this guide (data fabric + multi-agent orchestration + no-code interface) represents the destination, not an intermediate step.

As organizations mature their data fabric infrastructure, federated, agent-driven data ecosystems will become possible at scale: domain teams operating their own data products within a governed, agent-connected ecosystem. This is the convergence point of data mesh architecture principles and agentic AI execution: domain ownership with centralized governance and autonomous integration.

Getting Started with Agentic AI Data Workflows

The transition from static, engineering-heavy data pipelines to autonomous, agentic workflows is not a distant trend; it is happening now, across industries, in organizations that have decided to stop managing data manually and let intelligent systems do it instead.

The operational case is clear: faster pipelines, fewer incidents, lower maintenance burden, and data products that reach the business in hours rather than weeks. Organizations with experience running AI workflows report not just operational improvements but also a compounding strategic advantage as their data infrastructure becomes faster, smarter, and more autonomous over time. The strategic case is equally compelling: organizations that build a real-time, AI-ready data fabric today will make faster, better-informed decisions than competitors still running on batch-processed, siloed data.

Arivonix is built for organizations that are ready to make this transition, whether you are a mid-market company deploying your first agentic workflow or an enterprise standardizing multi-agent orchestration across a complex data estate. Either way, well-designed agentic workflows deliver measurable outcomes from day one.

Ready to see Arivonix AI in action?

Start a free trial and deploy your first agentic data workflow in less than a day, with no upfront cost and no engineering prerequisite. Or book a demo for a personalized walkthrough.