Data Fabric: The Definitive Guide to Modern Data Architecture

What Is Data Fabric?

Data fabric is an architectural approach to data management that creates a unified, intelligent layer spanning multi-cloud, hybrid, and on-premises environments. Rather than forcing business data to move to a central location, it connects disparate data sources in place, enabling consistent data integration, data governance, and real-time access across every system an enterprise operates. It manages the full data lifecycle, from ingestion through to consumption, keeping business data in context at every stage.

- Unified Data Access: Consistent, governed access to data across all environments: cloud, on-premises, and edge.

- Active Metadata Intelligence: The fabric continuously reads, updates, and acts on metadata to automate pipelines, flag quality issues, and enforce policies.

- Real-Time Integration: Data is available for analytics and AI workloads as it is generated, not hours or days later.

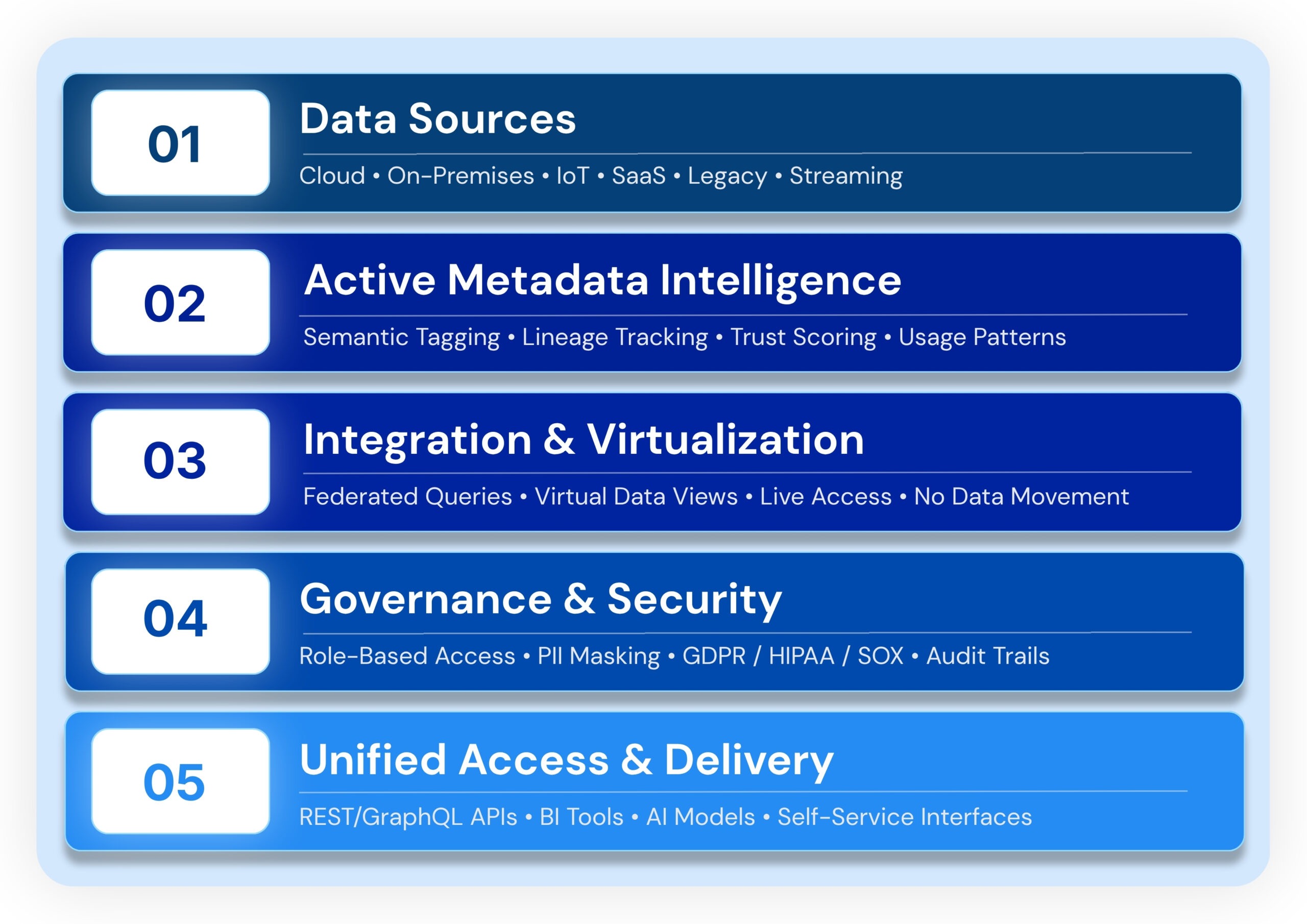

How Data Fabric Works: Data Fabric Architecture and Layers

A data fabric operates through a layered architecture that automates the discovery, integration, governance, and delivery of data across the enterprise. At its core, an augmented data catalog powered by metadata management continuously monitors assets, tracking data movement, data processing, and data streaming events across all connected storage systems. The active metadata layer is what separates modern data fabric solutions from earlier integration approaches: it tracks how data behaves and uses that intelligence to automate governance and surface relevant assets.
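To make the idea of active metadata concrete, here is a minimal sketch in Python. It is not taken from any particular platform: the `ActiveCatalog` and `AssetMetadata` names, the "stale" tag, and the one-hour threshold are all assumptions chosen for illustration. The point is only that the catalog updates metadata on every read and write event, then acts on it by flagging stale assets and surfacing the most-used fresh ones.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta, timezone


@dataclass
class AssetMetadata:
    """Metadata kept for one data asset (hypothetical shape)."""
    name: str
    source_system: str
    last_updated: datetime
    access_count: int = 0
    tags: set[str] = field(default_factory=set)


class ActiveCatalog:
    """Toy catalog that records events and acts on the resulting metadata."""

    def __init__(self, max_age: timedelta) -> None:
        self.max_age = max_age
        self.assets: dict[str, AssetMetadata] = {}

    def register(self, name: str, source_system: str, now: datetime) -> None:
        self.assets[name] = AssetMetadata(name, source_system, last_updated=now)

    def record_write(self, name: str, now: datetime) -> None:
        # A data movement or processing event refreshes the asset.
        self.assets[name].last_updated = now

    def record_read(self, name: str) -> None:
        # Usage statistics feed later recommendations.
        self.assets[name].access_count += 1

    def refresh_flags(self, now: datetime) -> None:
        # "Acting" on metadata: tag assets that exceed the freshness threshold.
        for asset in self.assets.values():
            if now - asset.last_updated > self.max_age:
                asset.tags.add("stale")
            else:
                asset.tags.discard("stale")

    def most_used(self, limit: int = 3) -> list[str]:
        # Surface relevant assets: fresh ones, ordered by usage.
        fresh = [a for a in self.assets.values() if "stale" not in a.tags]
        fresh.sort(key=lambda a: a.access_count, reverse=True)
        return [a.name for a in fresh[:limit]]


t0 = datetime(2024, 1, 1, tzinfo=timezone.utc)
catalog = ActiveCatalog(max_age=timedelta(hours=1))
catalog.register("orders", "postgres", t0)
catalog.register("clicks", "kafka", t0)
catalog.record_read("orders")
catalog.record_write("clicks", t0 + timedelta(hours=2))
catalog.refresh_flags(now=t0 + timedelta(hours=2))
```

In this run, `orders` has not been written for two hours and is tagged stale, so only `clicks` is surfaced. A production catalog would persist this state and react to events from real connectors, which this sketch leaves out.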

Key Problems Data Fabric Solves

- Data silos: Separate stores across business units with no consistent access model block cross-functional insight.

- Governance fragmentation: Policies in one system have no enforced equivalent in another, creating compliance risk and audit exposure.

- Integration lag: Traditional ETL data pipelines can’t keep pace with real-time demands of AI workloads and live digital services.

- Lack of fluidity across data environments: When data cannot move easily between environments, teams working with different data sources resort to manual exports and shadow IT, which multiply data risks.

- Data consumers underserved: Without governed self-service data access, consumers wait days for extracts instead of accessing data products directly.

Data Fabric vs. Data Mesh vs. Data Warehouse vs. Data Lake

These architectures are not mutually exclusive. Many mature organizations run data fabric alongside a data mesh, using the fabric’s integration layer to support domain-level data products. A decentralized data architecture like data mesh defines who owns the data; data fabric defines how it is connected and governed. Cloud data warehouse solutions remain valuable for structured analytics but struggle with the real-time, multi-source demands of modern AI workloads.

When to Choose Data Fabric

Data fabric is the right choice when business data is distributed across multiple systems or clouds. It fits enterprises that need real-time availability for AI or operational workloads, consistent data governance across a heterogeneous estate, and governed self-service data access without compromising security. Unlike a data lake or data lakehouse, which centralize raw storage, data fabric connects data in place across all environments without duplication.
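The "connect data in place" idea can be sketched as a thin access layer that routes each read to the system that already holds the data and applies one access policy on the way. The example below is an illustrative assumption, not any vendor's API: `FabricAccessLayer`, `AccessDenied`, the dataset names, and the role names are all hypothetical, and the lambdas stand in for real connectors.

```python
from typing import Callable, Iterable

Row = dict[str, object]
Reader = Callable[[], Iterable[Row]]


class AccessDenied(Exception):
    """Raised when a role is not allowed to read a dataset."""


class FabricAccessLayer:
    """Routes reads to the source system; no data is copied at registration."""

    def __init__(self) -> None:
        self._readers: dict[str, Reader] = {}
        self._allowed_roles: dict[str, set[str]] = {}

    def connect(self, dataset: str, reader: Reader, allowed_roles: set[str]) -> None:
        # Only a reference to the source is stored, not the data itself.
        self._readers[dataset] = reader
        self._allowed_roles[dataset] = allowed_roles

    def read(self, dataset: str, role: str) -> list[Row]:
        # One policy check, applied identically for every connected source.
        if role not in self._allowed_roles[dataset]:
            raise AccessDenied(f"role {role!r} may not read {dataset!r}")
        return list(self._readers[dataset]())


fabric = FabricAccessLayer()
# Stand-ins for a CRM database and an ERP system living in different places.
fabric.connect("crm.customers", lambda: [{"id": 1, "region": "EU"}], {"analyst"})
fabric.connect("erp.invoices", lambda: [{"id": 10, "customer_id": 1}], {"finance"})

rows = fabric.read("crm.customers", role="analyst")
```

The same `read` call works for both sources, while an analyst asking for `erp.invoices` is refused. A lake or lakehouse would instead copy both datasets into central storage first.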

Key Benefits of Data Fabric for Enterprise Data Management

- Faster Decision-Making: Real-time data access across all business units eliminates lag between data creation and business action.

- Reduced Engineering Overhead: Automated pipeline maintenance and schema drift detection free data engineers from reactive firefighting, reclaiming 30–60% of capacity for value-creating work.

- AI & ML Readiness: Data fabric gives AI models continuous access to clean, current, governed data; the lack of such data is the single most common blocker for AI deployment.

- Unified Data Security: Centralized data security policies enforce GDPR, CCPA, HIPAA, and SOX obligations consistently across hundreds of systems and various data sources.

- Fast Time-to-Value: Modern no-code data fabric platforms can be operational in under a day with pay-as-you-scale pricing.

- Self-Service Data Access: Business analysts access governed data without submitting IT tickets, accelerating insight without adding headcount.

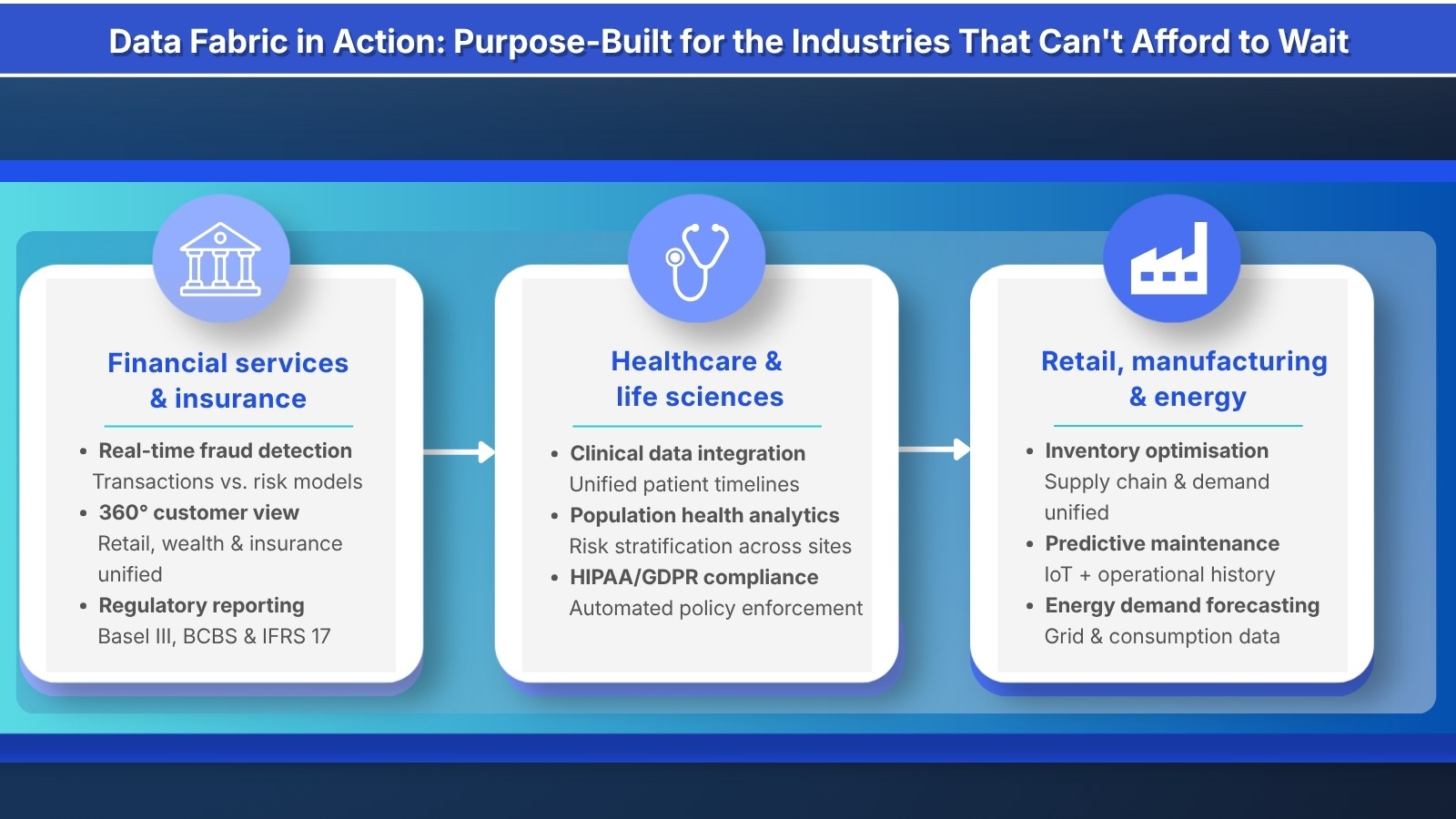

Data Fabric Use Cases Across Industries

The common thread across all data fabric use cases is a need for real-time, governed access to data that is distributed across multiple systems, teams, or geographies.

Financial Services & Insurance

- Real-time fraud detection: Streaming transaction data compared against risk models in milliseconds.

- 360° customer view: Unified profiles across retail banking, wealth, and insurance enable personalized recommendations.

- Regulatory reporting: Automated data lineage simplifies Basel III, BCBS 239, Solvency II, and IFRS 17 reporting cycles.

Healthcare & Life Sciences

- Clinical data integration: Unified patient timelines across disparate EHR systems improve clinical decision support.

- Population health analytics: Aggregating data across care sites enables risk stratification and proactive intervention.

- HIPAA/GDPR compliance: Automated lineage and policy enforcement across all connected systems protects sensitive data.

Retail, Manufacturing & Energy

- Real-time inventory optimization: Supply chain and demand signals unified to reduce stockouts and overstock.

Implementing Data Fabric: Data Integration, Automation & a 6-Step Practical Guide

Modern data fabric solutions can be deployed incrementally using DataOps principles and no-code tools, starting with the highest-priority data domains. Automation drives every layer of data integration and governance, reducing manual effort at scale.

- Step 1 – Assess Existing Data Systems: Map your current data estate: identify all organizational data sources, owners, volumes, and update frequencies. Document integration points and known governance gaps.

- Step 2 – Define Data Products & Consumer Requirements: Define what data products you need to deliver and to whom before designing infrastructure.

- Step 3 – Design a Metadata-Driven Architecture: Build governance and data integration design around the metadata layer. Define classification taxonomy, access policies, and quality standards first.

- Step 4 – Establish Governance Frameworks: Stand up your data catalog, policy engine, access control framework, and audit logging. Ensure these run automatically, not as manual checkpoints that create bottlenecks for data engineers.

- Step 5 – Connect Priority Sources & Validate: Deploy connectors to highest-priority sources. Validate data quality, test access controls, and confirm lineage tracking before expanding.

- Step 6 – Enable Self-Service & Scale: Open the platform to business analysts and data scientists with no-code tools and pre-built templates. Apply DataOps practices to maintain pipeline quality and deployment velocity at scale.
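The validation in Step 5 can be as simple as an automated quality gate that runs against a sample from each newly connected source. The following is a minimal sketch under stated assumptions: the `QualityRule` shape, the null-fraction metric, and the thresholds are illustrative choices, not a prescribed standard.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class QualityRule:
    """Hypothetical rule: a column may be null in at most this share of rows."""
    column: str
    max_null_fraction: float


def null_fraction(rows: list[dict], column: str) -> float:
    """Share of rows where the column is missing or None."""
    if not rows:
        return 1.0  # An empty sample is treated as fully missing.
    missing = sum(1 for row in rows if row.get(column) is None)
    return missing / len(rows)


def validate_source(rows: list[dict], rules: list[QualityRule]) -> list[str]:
    """Return one human-readable failure per violated rule."""
    failures = []
    for rule in rules:
        fraction = null_fraction(rows, rule.column)
        if fraction > rule.max_null_fraction:
            failures.append(
                f"{rule.column}: {fraction:.0%} null exceeds "
                f"{rule.max_null_fraction:.0%}"
            )
    return failures


sample = [
    {"id": 1, "email": "a@example.com"},
    {"id": 2, "email": None},
    {"id": 3, "email": "c@example.com"},
    {"id": 4},  # email column missing entirely
]
rules = [QualityRule("id", 0.0), QualityRule("email", 0.10)]
failures = validate_source(sample, rules)
```

Here the `email` column fails because half of the sampled rows have no value, so the source would be held back until the issue is resolved. An empty `failures` list is the signal to expand to the next source.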

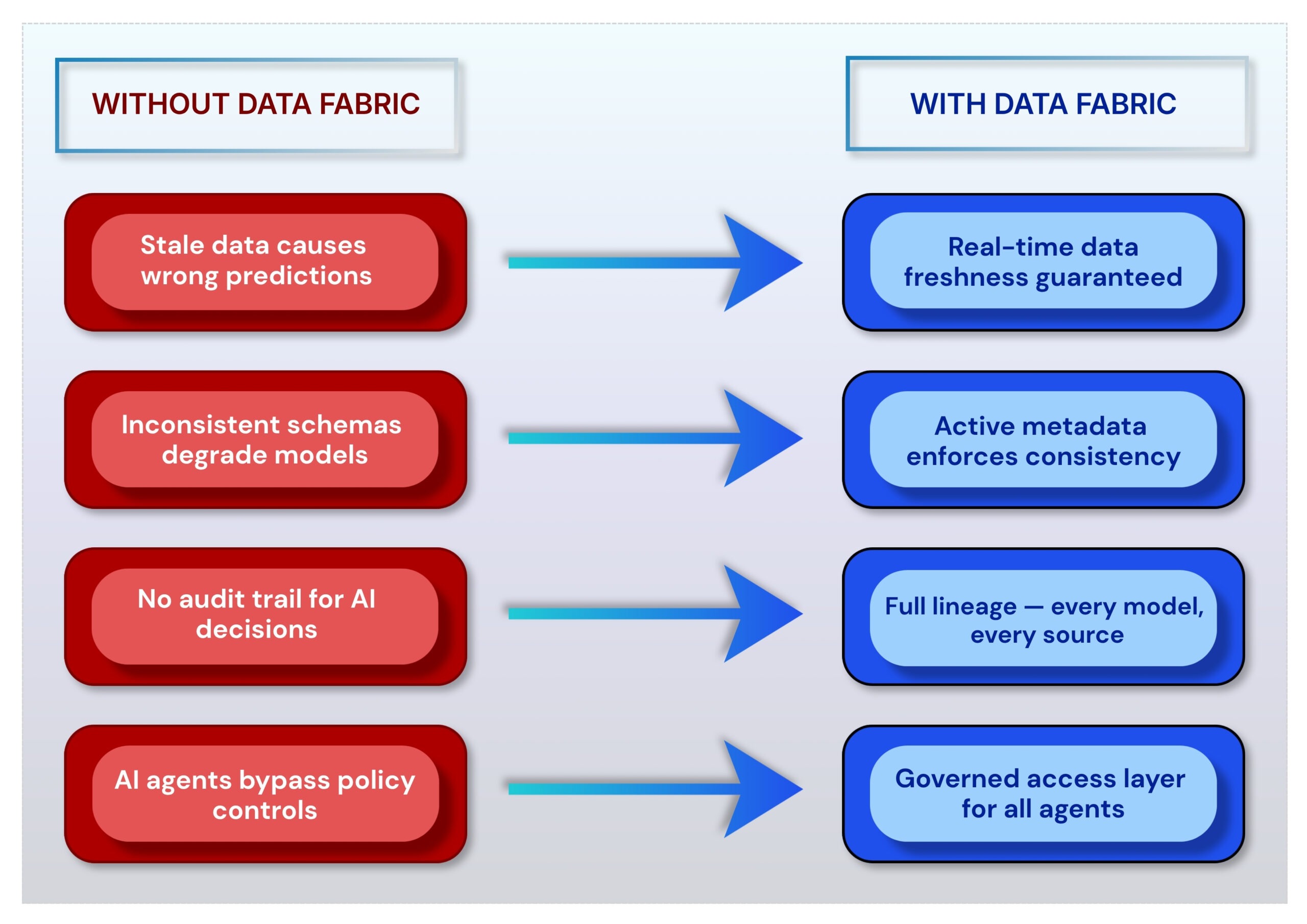

Data Fabric for AI Readiness

The promise of enterprise AI depends entirely on the quality, freshness, and governance of underlying data. Data fabric is not merely data infrastructure; it is foundational AI infrastructure. It addresses the four most common AI data readiness failures:

- Data freshness: AI models require current data. Data that is stale, even by hours, produces incorrect predictions in fraud detection, demand forecasting, or patient risk scoring.

- Cross-source consistency: Data fabric’s active metadata layer enforces semantic consistency before data reaches the model, even when drawing from various data sources.

- Governance for AI outputs: Data fabric’s lineage capabilities make AI decisions auditable at enterprise scale, which regulators increasingly require.

- Agentic AI orchestration: Multi-agent AI systems need real-time data access with strong governance guardrails. Data fabric provides exactly this environment.
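Auditable AI decisions come down to being able to walk lineage backwards from an output to every dataset that fed it. The sketch below shows that traversal with a small in-memory graph. The `LineageGraph` class and all node names are hypothetical, and a real fabric would populate the edges automatically from pipeline metadata.

```python
from collections import defaultdict


class LineageGraph:
    """Directed graph of 'upstream feeds downstream' relationships."""

    def __init__(self) -> None:
        self._parents: dict[str, set[str]] = defaultdict(set)

    def add_edge(self, upstream: str, downstream: str) -> None:
        self._parents[downstream].add(upstream)

    def upstream_of(self, node: str) -> set[str]:
        """Everything the node depends on, directly or transitively."""
        seen: set[str] = set()
        stack = [node]
        while stack:
            current = stack.pop()
            # .get avoids creating empty entries for source nodes.
            for parent in self._parents.get(current, ()):
                if parent not in seen:
                    seen.add(parent)
                    stack.append(parent)
        return seen


lineage = LineageGraph()
lineage.add_edge("crm.customers", "features.customer_risk")
lineage.add_edge("core.transactions", "features.customer_risk")
lineage.add_edge("features.customer_risk", "model.fraud_v3")
lineage.add_edge("model.fraud_v3", "decision.txn_123")

audit_trail = lineage.upstream_of("decision.txn_123")
```

For the decision `decision.txn_123`, the audit trail contains the model, the feature set, and both source tables, which is the evidence an auditor would ask for.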

Arivonix integrates multi-agentic AI natively within the data fabric layer, enabling automated insights, autonomous pipeline management, and AI-driven data product generation.

Arivonix Data Fabric Platform: No-Code for Enterprise & Mid-Market

Arivonix is an agentic AI and data fabric solution built for organizations that need to unify their data estate and generate real-time insights without a multi-year infrastructure program. The platform covers every core capability of a modern data fabric, from data ingestion and data processing to governance, data security, and monetization, through a single no-code interface.

- Unified Data Integration: Connect structured, unstructured, streaming, and legacy data sources across cloud and on-premises through a single governed platform. Supports data ingestion from 250+ sources.

- Multi-Agentic AI: Native AI agents that automate data discovery, pipeline generation, quality monitoring, and insight delivery.

- Augmented Knowledge Catalog: An active data catalog powered by ML that automates classification, data discovery, lineage tracking, and quality scoring across all connected sources.

- No-Code Interface: Visual builder enables data product managers, data engineers, and business analysts to build integrations without writing code.

- 200+ Pre-Built Connectors: Maintained connectors across cloud platforms, SaaS apps, databases, and legacy systems, reducing custom development to near zero.

- Built-In Data Security & Compliance: Policy-as-code data governance with templates for GDPR, HIPAA and SOC 2. Sensitive data is automatically identified and protected across all connected storage systems.

- Data Monetization: Tools to package, govern, and deliver data products for internal consumption or external commercialization.

- Speed to value: Pre-built connectors and a no-code interface mean your first working integration can be live in under a day, not 6 months.

- Scalable economics: Pay-as-you-scale pricing means no multi-year budget commitment. Start with one use case, prove ROI, then expand.

- AI-ready architecture: Multi-agentic AI is embedded natively, not bolted on. As your AI strategy matures, your data infrastructure is already prepared.

- Data product monetization: Go beyond data access to enable data product creation and new revenue streams from assets you already own.

How to Evaluate Data Fabric Vendors: Data Governance, Data Access & Buyer’s Checklist

Look for platforms that combine enterprise-grade automation, intuitive no-code tools, and deep data governance, not just connector count. These criteria separate true data fabric platforms from integration middleware:

- Active Metadata Maturity: Does the platform actively monitor, score, and act on metadata in real time?

- Connector Breadth: How many pre-built connectors are available across various data sources, and are they vendor-maintained?

- Governance & Compliance Depth: Are GDPR, HIPAA, SOC 2 built-in as templates or configured from scratch?

- Pricing Model: Consumption-based or pay-as-you-scale reduces financial risk, especially for initial deployments.

- Microsoft Fabric Compatibility: For Microsoft-invested organizations, evaluate how the platform integrates with Microsoft Fabric connectors and its governance layer.
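One way to apply the criteria listed above is a weighted scoring matrix. The sketch below is only an illustration: the weights, the 1 to 5 rating scale, and the two vendor profiles are assumptions, and each organization should set its own weights to reflect its priorities.

```python
# Hypothetical weights for the criteria above; they must sum to 1.0.
CRITERIA_WEIGHTS = {
    "active_metadata": 0.30,
    "connector_breadth": 0.20,
    "governance_depth": 0.25,
    "pricing_model": 0.15,
    "microsoft_fabric_compat": 0.10,
}


def weighted_score(ratings: dict[str, int]) -> float:
    """Combine 1-5 ratings per criterion into a single weighted score."""
    missing = set(CRITERIA_WEIGHTS) - set(ratings)
    if missing:
        raise ValueError(f"missing ratings for: {sorted(missing)}")
    return sum(CRITERIA_WEIGHTS[name] * ratings[name] for name in CRITERIA_WEIGHTS)


# Illustrative ratings for two fictional vendors.
vendor_a = {
    "active_metadata": 5,
    "connector_breadth": 3,
    "governance_depth": 4,
    "pricing_model": 4,
    "microsoft_fabric_compat": 2,
}
vendor_b = {
    "active_metadata": 3,
    "connector_breadth": 5,
    "governance_depth": 3,
    "pricing_model": 3,
    "microsoft_fabric_compat": 5,
}

score_a = weighted_score(vendor_a)
score_b = weighted_score(vendor_b)
```

With these assumed weights, the vendor with stronger active metadata and governance scores higher even though the other has more connectors, which mirrors the advice not to judge on connector count alone.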

Frequently Asked Questions

What does data fabric mean in simple terms?

Data fabric is a unified architecture that connects business data from disparate data sources across cloud, on-premises, and legacy environments. The result: business data is consistently accessible, governed, and ready for analytics and AI without requiring everything to move to a single location.

How does data fabric differ from data mesh?

Data mesh is an organizational model that decentralizes data ownership to domain teams. Data fabric is the technical infrastructure that provides shared integration, metadata management, and governance capabilities across all domains. They are complementary: data mesh defines who owns the data; data fabric defines how it is connected and governed.

What is active metadata in data fabric?

Active metadata is the intelligence layer that continuously monitors enterprise data assets, tracking quality, lineage, relationships, and usage, and uses that intelligence to automate data governance, integration, and data discovery. It powers an augmented data catalog and is the defining characteristic of modern data fabric platforms, as identified by Gartner.

How long does it take to implement data fabric?

Traditional enterprise implementations have historically required 6–18 months. Modern no-code platforms like Arivonix deploy initial environments in under one day using pre-built tools and automation, with full enterprise rollout measured in weeks. The key variables are connector availability and data governance complexity.

Is data fabric relevant for mid-market organizations?

Yes. No-code data fabric platforms have changed the economics of adoption. Organizations with as few as 50 data users and a multi-cloud or hybrid data estate can deploy cost-effectively with pay-as-you-scale pricing.

Ready to see Arivonix AI in action?

Start a free trial and deploy your first agentic data workflow in less than a day, with no upfront cost and no engineering prerequisite. Or book a demo for a personalized walkthrough.