When teams first start experimenting with AI, the focus usually lands on performance — how cleanly the model answers questions, and how quickly it generates outputs. That is completely understandable; those early days are all about discovering what is possible.

But production brings a different reality. As soon as AI systems move into real workflows, a different set of questions surfaces:

- What actually happened here?

- Why did the decision go this way?

- How do we explain this outcome to a customer or regulator?

- Does the behavior hold up when the input looks nothing like our test data?

At that moment, AI governance stops feeling like an external compliance exercise. It starts to feel like the practical difference between something that can be trusted in daily operations and something that remains confined to controlled demos.

What enterprises navigating this transition consistently find

Across organizations moving from pilots to production, a consistent pattern emerges: governance rarely comes down to imposing rules for their own sake. It is about creating the conditions in which artificial intelligence becomes genuinely usable and defensible at meaningful scale. Responsible AI is not a checklist; it is the operating foundation that determines whether stakeholders, from regulators to internal teams, can trust what the system produces.

Forward-looking discussions of enterprise-grade Agentic AI, including strategic playbooks looking toward 2026, point to the same insight: operational readiness depends far more on the structural characteristics of the systems surrounding a model than on model benchmarks alone. Organizations that invest early in a robust AI governance framework consistently outperform those that treat governance as a post-deployment patch.

Transparency becomes non-negotiable once people rely on the output

In controlled experiments, opaque behavior is easier to accept because humans are still in the loop, reviewing every result. The moment AI systems run autonomously in production, that opacity becomes a real liability. The goal is not to reveal every internal weight or parameter but to provide basic visibility: knowing which data shaped a particular decision, understanding how the AI models were trained and updated, and being able to follow the reasoning path from input to outcome. Under frameworks like the EU AI Act, this level of traceability is not optional. It is a baseline expectation, particularly for systems classified as high-risk, and part of what responsible AI development demands.
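To make "following the reasoning path" concrete, here is a minimal sketch of a per-decision trace record. It assumes each decision can be tied to a model version and to versioned dataset snapshots; the class name `DecisionTrace`, its fields, and the example values are hypothetical illustrations, not part of any framework or product named in this post.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class DecisionTrace:
    """Hypothetical audit record linking one output to what shaped it."""
    decision_id: str
    model_version: str            # which trained/updated model produced the output
    dataset_versions: list[str]   # lineage: data snapshots the decision depended on
    input_summary: str
    steps: list[str] = field(default_factory=list)  # reasoning path, input -> outcome
    outcome: str = ""
    created_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def add_step(self, description: str) -> None:
        """Append one hop of the reasoning path, in order."""
        self.steps.append(description)

    def fingerprint(self) -> str:
        """SHA-256 over the canonical record, so later edits are detectable."""
        payload = json.dumps(asdict(self), sort_keys=True)
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()


if __name__ == "__main__":
    # Hypothetical example values, for illustration only.
    trace = DecisionTrace(
        decision_id="req-0001",
        model_version="scorer:1.4.2",
        dataset_versions=["applications:v12", "reference-rates:v7"],
        input_summary="application #0001",
    )
    trace.add_step("retrieved reference rate")
    trace.add_step("score above approval threshold")
    trace.outcome = "approved"
    print(trace.fingerprint())
```

A content hash is only one simple way to make tampering visible; a production audit trail would also need durable, append-only storage.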

When visibility is present from the beginning

AI systems designed with traceability, lineage, and clear documentation tend to earn adoption much more quickly. Teams feel safer relying on them because the answers to “how did we get here?” are readily available rather than requiring forensic digging after the fact. The shift toward unified data governance practices (strong lineage, consistent integration, proper cataloging) turns out to be less about modernization theater and more about building a foundation for reliable, explainable action. This is precisely what Arivonix AI’s Data-Centric AI Assurance framework is built around.

Reliability shows up differently in the wild

Prototypes often perform beautifully on hand-picked examples. Production environments throw messy, incomplete, edge-case data at AI systems every day. Sustained reliability comes from operational practices rooted in AI ethics: risk management through anomaly detection, output guardrails, continuous monitoring for drift or unintended bias, and mechanisms to interpret unexpected behavior when it occurs. Treating quality, context, and oversight as core principles of the system, rather than after-market additions, reduces uncertainty in a way that feels like built-in assurance rather than restriction. Ethical guidelines and standards embedded at the design stage are consistently easier to maintain than those retrofitted after deployment.
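Continuous drift monitoring is one of the practices above that is easy to show in code. The sketch below computes the population stability index (PSI), one common drift statistic, between a reference sample of a feature and a production sample. The ten equal-width bins and the 0.25 alert threshold are widely used rules of thumb, assumed here for illustration rather than prescribed by any standard.

```python
import math
from collections.abc import Sequence

DRIFT_THRESHOLD = 0.25  # rule-of-thumb alert level (assumed); tune per feature


def population_stability_index(
    expected: Sequence[float], actual: Sequence[float], bins: int = 10
) -> float:
    """PSI between a reference sample (expected) and a production sample (actual)."""
    lo, hi = min(expected), max(expected)
    # Equal-width bins over the reference range; fall back to width 1.0 if constant.
    width = (hi - lo) / bins or 1.0

    def proportions(values: Sequence[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            # Clamp so production values outside the reference range land in edge bins.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        # A small floor avoids log(0) and division by zero for empty bins.
        return [max(c / len(values), 1e-6) for c in counts]

    p_exp, p_act = proportions(expected), proportions(actual)
    return sum((a - e) * math.log(a / e) for e, a in zip(p_exp, p_act))


def check_drift(
    reference: Sequence[float], production: Sequence[float]
) -> tuple[float, bool]:
    """Return the PSI and whether it crosses the alert threshold."""
    psi = population_stability_index(reference, production)
    return psi, psi > DRIFT_THRESHOLD


if __name__ == "__main__":
    reference = [i / 100 for i in range(100)]
    shifted = [x + 0.9 for x in reference]  # simulated production drift
    print(check_drift(reference, reference))  # identical samples: PSI is 0
    print(check_drift(reference, shifted))
```

In practice a check like this would run on a schedule per feature (and per model output), with alerts routed to whoever owns the model rather than left in a dashboard.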

Scalability reveals the cracks quickly

A single transparent, reliable system is manageable. When usage spreads across multiple teams, departments, and use cases, manual oversight collapses under its own weight. What survives at scale is a governance framework that lives inside the pipelines: consistently enforced policies, versioned configurations, reproducible environments, and tight linkage between lineage, rules, and validation steps. Governance stops being something that happens after deployment and starts traveling with the system itself. For organizations operating under GDPR, CCPA, and evolving AI regulations, this embedded approach to regulatory compliance is not just good practice; it is a competitive requirement.
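One way to picture governance that travels with the pipeline is a step that refuses to run unless its configuration passes policy checks, and that stamps every run with a content-derived version id. The sketch below assumes a plain dictionary config; the policy names and config keys (`pii_redaction`, `model`, `dataset_versions`) are hypothetical examples, not a real schema.

```python
import hashlib
import json
from typing import Callable

# Hypothetical policies for illustration; real ones would come from a governed policy store.
POLICIES: dict[str, Callable[[dict], bool]] = {
    # Outputs must pass through PII redaction.
    "pii_redaction_enabled": lambda cfg: cfg.get("pii_redaction") is True,
    # Model must be pinned to an explicit version, never a floating ":latest" tag.
    "model_version_pinned": lambda cfg: (
        ":" in cfg.get("model", "") and not cfg["model"].endswith(":latest")
    ),
    # Lineage: the config must name the dataset versions it depends on.
    "lineage_recorded": lambda cfg: bool(cfg.get("dataset_versions")),
}


def config_version(cfg: dict) -> str:
    """Content-derived version id: identical configs always get the same id."""
    canonical = json.dumps(cfg, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:12]


def enforce_policies(cfg: dict) -> list[str]:
    """Names of violated policies; an empty list means the config passes."""
    return [name for name, rule in POLICIES.items() if not rule(cfg)]


def run_pipeline_step(cfg: dict) -> str:
    """Refuse to run a non-compliant config; otherwise return its version id."""
    violations = enforce_policies(cfg)
    if violations:
        raise ValueError(f"config {config_version(cfg)} violates {violations}")
    # The real step would run here and log the version id alongside its outputs.
    return config_version(cfg)


if __name__ == "__main__":
    good = {"model": "scorer:1.4.2", "pii_redaction": True, "dataset_versions": ["ds:v3"]}
    print(run_pipeline_step(good))
```

Because the version id is a hash of canonical JSON, key order does not matter, and the same configuration reproduces the same id in every environment, which is what lets lineage, rules, and validation results be linked to one another.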

Security and observability, especially with Agentic systems

Agentic AI introduces its own realities. These Agentic workflows expand the attack surface dramatically; they call external tools, access live data sources, and execute actions across boundaries. Traditional perimeter defenses quickly prove insufficient against that level of dynamism. AI security must be architectural, not peripheral, and it must account for the human rights and data rights of every individual whose information the system touches.

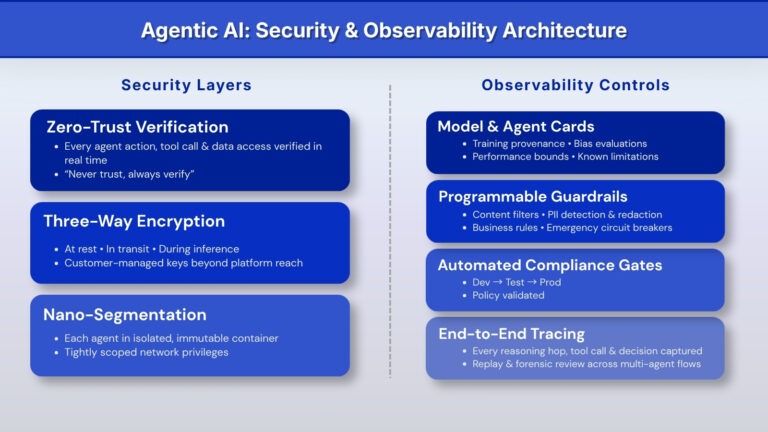

What is emerging in production-grade environments is a layered posture grounded in ethical governance and AI governance frameworks:

- Zero-trust verification applied to every agent action, tool invocation, and data access in real time (“never trust, always verify”).

- Three-way encryption, meaning data is protected at rest, in transit, and even in use during inference, with customer-managed keys that remain out of reach of platform operators.

- Nano-segmentation where each agent executes inside its own isolated, immutable container with tightly scoped network privileges, limiting blast radius if something is compromised.
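The zero-trust item in the list above can be sketched as a check that runs on every tool invocation: the request must be provably signed by the calling agent, and the specific (tool, resource) pair must fall inside that agent's scoped grant. The shared-secret HMAC scheme, the `AgentIdentity` structure, and the tool names are assumptions chosen for brevity; real deployments typically rely on short-lived credentials issued by an identity provider.

```python
import hashlib
import hmac
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentIdentity:
    """Hypothetical agent record: identity key plus scoped (tool, resource) grants."""
    agent_id: str
    secret: bytes                        # per-agent signing key (assumed shared secret)
    allowed: frozenset[tuple[str, str]]  # least-privilege grants


def sign_request(secret: bytes, agent_id: str, tool: str, resource: str) -> str:
    """HMAC-SHA256 over the exact action being requested."""
    message = f"{agent_id}|{tool}|{resource}".encode("utf-8")
    return hmac.new(secret, message, hashlib.sha256).hexdigest()


def authorize(agent: AgentIdentity, tool: str, resource: str, signature: str) -> bool:
    """Verify identity and scope on every call; anything not explicitly granted is denied."""
    expected = sign_request(agent.secret, agent.agent_id, tool, resource)
    if not hmac.compare_digest(expected, signature):
        return False  # cannot prove the request came from this agent
    return (tool, resource) in agent.allowed


if __name__ == "__main__":
    crm_reader = AgentIdentity("agent-crm", b"demo-key", frozenset({("crm.read", "accounts")}))
    read_sig = sign_request(b"demo-key", "agent-crm", "crm.read", "accounts")
    print(authorize(crm_reader, "crm.read", "accounts", read_sig))  # True
    write_sig = sign_request(b"demo-key", "agent-crm", "crm.write", "accounts")
    print(authorize(crm_reader, "crm.write", "accounts", write_sig))  # False: outside scope
```

Nothing is cached between calls, so an agent that was allowed one action a moment ago gains no implicit trust for its next one. A fuller version would also bind a timestamp or nonce into the signed message to block replayed requests.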

On the observability side, agentic behavior without deliberate structure can easily turn into opaque shadow IT. Arivonix AI addresses this through Standardized Model Cards and Agent Cards that capture training provenance, performance boundaries, bias evaluations, intended scope, and known limitations, supporting trustworthy AI at every layer. Programmable guardrails (content filters, PII detection and redaction, business-rule enforcement, emergency circuit breakers) attach directly to agents. Deployment workflows include automated risk and compliance gates, so promotion from dev → test → prod carries built-in policy validation. Full end-to-end tracing captures every reasoning hop, tool call, and decision across multi-agent interactions, making replay and forensic review possible when questions arise.
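Programmable guardrails of the kind described above can be approximated with a redaction filter feeding an emergency circuit breaker. The sketch below is a hypothetical illustration, not Arivonix AI’s implementation: its two regular expressions cover only email addresses and the US Social Security number format, and the breaker simply halts an agent after a configurable number of guardrail hits.

```python
import re

# Illustrative patterns only; production PII detection needs far broader coverage.
PII_PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "US_SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def redact_pii(text: str) -> tuple[str, int]:
    """Replace each match with a typed placeholder; return redacted text and hit count."""
    hits = 0
    for label, pattern in PII_PATTERNS.items():
        text, n = pattern.subn(f"[{label}]", text)
        hits += n
    return text, hits


class CircuitBreaker:
    """Trips after max_violations guardrail hits; a tripped agent emits no more output."""

    def __init__(self, max_violations: int = 3) -> None:
        self.max_violations = max_violations
        self.violations = 0

    @property
    def is_open(self) -> bool:
        return self.violations >= self.max_violations

    def record(self, count: int) -> None:
        self.violations += count


def guarded_output(raw: str, breaker: CircuitBreaker) -> str:
    """Run agent output through the guardrail before it leaves the agent."""
    if breaker.is_open:
        raise RuntimeError("circuit breaker open: agent halted pending review")
    clean, hits = redact_pii(raw)
    breaker.record(hits)
    return clean


if __name__ == "__main__":
    breaker = CircuitBreaker(max_violations=2)
    print(guarded_output("Contact jane.doe@example.com", breaker))  # Contact [EMAIL]
```

Counting redactions as violations is a deliberately simple trip condition; a real deployment would likely combine several signals (filter hits, business-rule failures, error rates) and require a human to reset the breaker.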

The bottom line

The transition from pilot success to production confidence rarely hinges on making AI models dramatically smarter. It hinges on whether the surrounding architecture (AI governance, AI security, and observability) allows people to trust the system enough to let it run meaningfully. When those principles and guidelines are thoughtfully in place, artificial intelligence stops being an experiment and starts behaving like infrastructure: repeatable, accountable, and scalable across the organization. This is what responsible AI looks like in practice. It is not a policy document but a living architecture built on clear ethical standards.

This is the architecture Arivonix AI is built to deliver end to end, out of the box.


This blog was first published on Medium.