Most enterprise architectures were built on a quiet assumption: systems act; people decide.

Agentic AI flips that, and it does so in ways that most existing security frameworks were simply not designed to handle.

AI agents now trigger workflows, access multiple systems, and make consequential decisions without waiting for human approval. For data leaders, this rarely surfaces as an immediate security alarm. It tends to arrive as a design question:

Where do these agents live in the architecture?

How much autonomy is appropriate?

What happens when something goes wrong?

That framing is understandable. But experience across enterprise deployments keeps pointing to the same conclusions:

- The design question and the security question are the same question.

- Organizations that recognize this early are the ones building with confidence.

- Those that treat it as a design question alone end up reacting after the impact rather than at the point of decision.

When the Perimeter Stops Being Enough

Traditional enterprise security was built around a manageable boundary. Data lived in known places. Applications had predictable behaviors. Humans made decisions; systems executed them.

That model was already under pressure from cloud, SaaS, and distributed APIs. Agentic AI does not just add more pressure; it changes the nature of what needs to be governed.

Autonomous agents do not simply access data. They act on it. They call external tools, cross system boundaries, and execute multi-step workflows within seconds. A single misconfigured or compromised agent can propagate failures across domains before any alert fires, not because security was absent, but because it was positioned at the wrong layer.

The exposure is not purely technical either. Every individual whose data an agentic system touches has rights that are increasingly codified under GDPR, CCPA, and the EU AI Act. Governance frameworks that treat this as a secondary concern quickly find it becomes a primary liability.

What Changes When Security Becomes Architectural

The organizations navigating agentic AI well are not the ones with the most sophisticated threat detection. They are the ones that stopped treating security as something applied to agentic systems and started treating it as a property of how those systems are built.

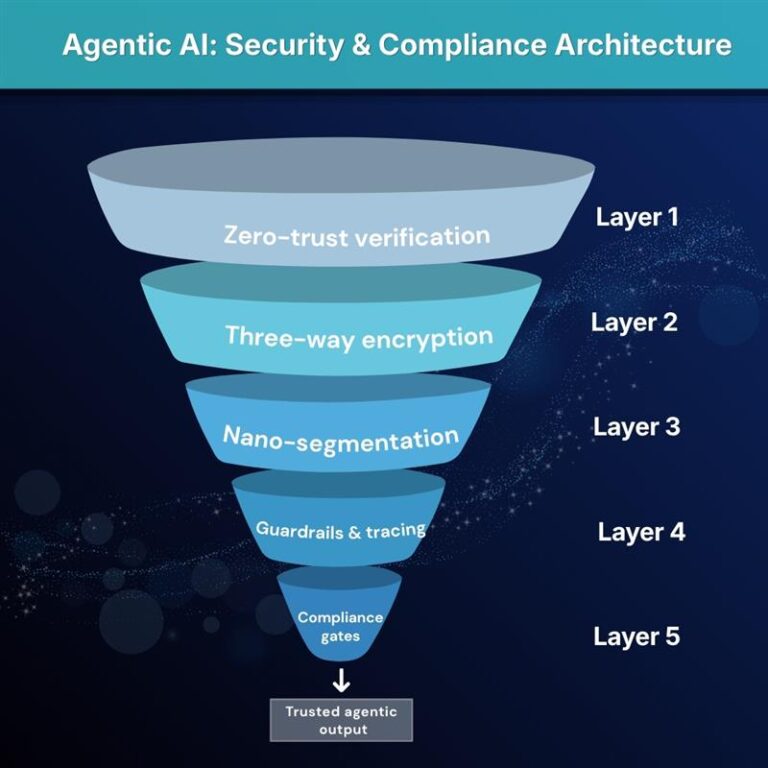

Three principles define what this looks like in production, and they are foundational to how Arivonix AI approaches enterprise agentic deployments:

- Zero-trust verification applied to every agent action, tool invocation, and data access in real time (“never trust, always verify”). Arivonix AI applies this at the agent runtime, so policy enforcement travels with the agent into production rather than waiting at the edge.

- Encryption across all three states of data (at rest, in transit, and in use during inference), using customer-managed keys that platform operators cannot access. Sensitive information stays under enterprise control even while it is being processed, a state traditional security architectures rarely accounted for.

- Nano-segmentation, where each agent executes inside its own isolated, immutable container with tightly scoped network privileges, which limits the blast radius by design rather than by reaction.
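To make "never trust, always verify" concrete, here is a minimal Python sketch of a default-deny check that runs on every tool call. It assumes each agent has an allowlist of tools, data scopes, and network hosts. The names `ToolCall`, `AgentPolicy`, and `authorize` are hypothetical illustrations, as are the agent, tool, and host identifiers; none of them is Arivonix AI's API. The host allowlist loosely mirrors the scoped network privileges described under nano-segmentation. In a real deployment that boundary would be enforced by the container and network layer, not by application code alone.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class ToolCall:
    """One action an agent wants to take (hypothetical shape)."""
    agent_id: str
    tool: str
    data_scope: str
    host: str


@dataclass
class AgentPolicy:
    """Per-agent allowlists; anything not listed is denied (default deny)."""
    allowed_tools: set[str] = field(default_factory=set)
    allowed_scopes: set[str] = field(default_factory=set)
    allowed_hosts: set[str] = field(default_factory=set)


class PolicyDenied(Exception):
    """Raised when a call fails any verification check."""


def authorize(call: ToolCall, policies: dict[str, AgentPolicy]) -> None:
    """Verify a single call at the moment it is made; raise if any check fails."""
    policy = policies.get(call.agent_id)
    if policy is None:
        raise PolicyDenied(f"unknown agent {call.agent_id!r}")
    if call.tool not in policy.allowed_tools:
        raise PolicyDenied(f"{call.agent_id} may not call {call.tool}")
    if call.data_scope not in policy.allowed_scopes:
        raise PolicyDenied(f"{call.agent_id} may not read {call.data_scope}")
    if call.host not in policy.allowed_hosts:
        raise PolicyDenied(f"{call.agent_id} may not reach {call.host}")


# Usage with hypothetical agent, tool, and host names.
policies = {
    "invoice-agent": AgentPolicy(
        allowed_tools={"erp.read_invoice"},
        allowed_scopes={"finance.invoices"},
        allowed_hosts={"erp.internal"},
    )
}

# Permitted: every attribute of the call is on the agent's allowlists.
authorize(ToolCall("invoice-agent", "erp.read_invoice", "finance.invoices", "erp.internal"), policies)

try:
    authorize(ToolCall("invoice-agent", "email.send", "finance.invoices", "smtp.example.com"), policies)
except PolicyDenied as err:
    print("blocked:", err)  # → blocked: invoice-agent may not call email.send
```

The check runs on each call rather than once per session. As a result, revoking a tool or scope in the policy takes effect on the agent's very next action.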

Observability Is the Part Most Enterprises Underestimate

Security and observability are often treated as separate concerns. With agentic AI, they are not. An agent that cannot be traced is an agent that cannot be governed, and an agent that cannot be governed cannot be trusted at production scale.

Without deliberate structure, agentic behavior quickly becomes opaque shadow IT. Arivonix AI addresses this through capabilities embedded into deployment from the start, not added after the fact:

- Standardized Model Cards and Agent Cards capture training provenance, performance boundaries, bias evaluations, intended scope, and known limitations. These are living records that make every agent’s behavior traceable and defensible at any point in its lifecycle.

- Programmable guardrails, including content filters, PII detection and redaction, business-rule enforcement, and emergency circuit breakers, attach directly to agents at runtime, functioning as part of how agents execute rather than as external checkpoints.

- Automated compliance gates built into every deployment pipeline, so each promotion from dev to test to prod must pass policy validation. Risk and compliance checks happen before release, not after an audit surfaces a gap.

- Full end-to-end tracing that captures every reasoning hop, tool call, and decision across multi-agent interactions, making replay and forensic review possible the moment a question arises from a regulator, an auditor, or an internal team.
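Several of the runtime capabilities above can be sketched in a few dozen lines. The example below is a minimal Python illustration and assumes a single tool function per agent. It does three things:

- It redacts email addresses, as a simplistic stand-in for PII detection.
- It halts the agent after repeated failures, acting as an emergency circuit breaker.
- It appends every input, output, error, and block to a trace.

`GuardedAgent`, `CircuitBreaker`, `TraceEvent`, and `redact_pii` are hypothetical names, not Arivonix AI's implementation. Production systems would need far broader PII detection. They would also typically use a tracing standard such as OpenTelemetry to propagate trace IDs across multi-agent hops.

```python
import re
import time
import uuid
from dataclasses import dataclass, field
from typing import Callable

# Hypothetical, deliberately narrow PII rule: email addresses only.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")


def redact_pii(text: str) -> str:
    """Replace anything that looks like an email address."""
    return EMAIL.sub("[REDACTED_EMAIL]", text)


@dataclass
class TraceEvent:
    trace_id: str
    agent_id: str
    step: str
    detail: str
    ts: float = field(default_factory=time.time)


class CircuitBreaker:
    """Trips after max_failures consecutive failures and blocks further calls."""

    def __init__(self, max_failures: int = 3):
        self.max_failures = max_failures
        self.failures = 0

    @property
    def is_open(self) -> bool:
        return self.failures >= self.max_failures

    def record(self, ok: bool) -> None:
        # A success resets the count; a failure increments it.
        self.failures = 0 if ok else self.failures + 1


class GuardedAgent:
    """Wraps a tool function with runtime guardrails and an append-only trace."""

    def __init__(self, agent_id: str, tool: Callable[[str], str], breaker: CircuitBreaker):
        self.agent_id = agent_id
        self.tool = tool
        self.breaker = breaker
        self.trace: list[TraceEvent] = []

    def _log(self, trace_id: str, step: str, detail: str) -> None:
        self.trace.append(TraceEvent(trace_id, self.agent_id, step, detail))

    def run(self, prompt: str) -> str:
        trace_id = uuid.uuid4().hex
        if self.breaker.is_open:
            self._log(trace_id, "blocked", "circuit breaker open")
            raise RuntimeError("circuit breaker open; agent halted")
        safe_prompt = redact_pii(prompt)  # guardrail on the way in
        self._log(trace_id, "input", safe_prompt)
        try:
            result = self.tool(safe_prompt)
        except Exception as err:
            self.breaker.record(ok=False)
            self._log(trace_id, "error", redact_pii(repr(err)))
            raise
        self.breaker.record(ok=True)
        safe_result = redact_pii(result)  # guardrail on the way out
        self._log(trace_id, "output", safe_result)
        return safe_result


agent = GuardedAgent("support-agent", lambda t: f"echo: {t}", CircuitBreaker(max_failures=2))
print(agent.run("Contact jane.doe@example.com"))  # → echo: Contact [REDACTED_EMAIL]
```

Redaction runs both before the tool sees the prompt and on its result. Every trace entry in this sketch is therefore written from redacted text, so the trace itself can be kept for replay without becoming a new store of personal data.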

What Data Leaders Are Actually Optimizing For

Most data leaders navigating this transition are not trying to build perfectly secure systems in the abstract. They are trying to build architecture that holds up under real operational and regulatory pressure and that continues to hold up as agentic AI spreads across teams, departments, and use cases.

That usually comes down to three questions:

- Can we understand what an AI agent did and why?

- Can we contain failures without stopping everything?

- Can we meet compliance expectations without slowing teams down?

The organizations that can answer yes to all three tend to be the ones that treated AI governance as an architectural property from the beginning. They built lineage, policy enforcement, versioned configurations, and reproducible environments before scale exposed the gaps.

For enterprises operating under GDPR and CCPA, and now the EU AI Act, most of whose obligations become applicable in 2026, this embedded approach is no longer just good practice. It is a competitive and regulatory requirement.

Arivonix AI’s Data-Centric AI Assurance framework is built precisely around this reality. It does not add governance to agentic deployments; it embeds governance into how those deployments are constructed, operated, and audited, from infrastructure through to the full compliance trail.

The Rethink Is Already Underway

Agentic AI does not demand a complete architectural reset. It asks for something more precise: a clear-eyed rethink of where trust, control, and accountability actually live in the stack.

The teams moving fastest are not the ones with the most capable models. They are the ones who recognized early that production confidence comes from the surrounding architecture and built accordingly.

When AI governance, security, and observability are thoughtfully embedded from the start, agentic AI stops being an experiment and starts behaving like infrastructure: repeatable, accountable, and scalable across the organization.

That is what responsible AI looks like in practice: not a policy document, but a living architecture built on clear principles at every layer.

This is the architecture Arivonix AI is built to deliver, end to end, out of the box.

Whether you’re ready to dive in or just exploring your options, we’re here to help.


This blog was first published on Medium.