Most enterprise data programs were built on a single assumption: data informs decisions that people make.

Agentic AI breaks that assumption in ways most governance models were never designed to handle.

AI agents now retrieve context from warehouses, data lakes, SaaS applications, and unstructured repositories. Then they act on it, executing multi-step workflows across live systems without waiting for human approval. For data and technology leaders, this rarely triggers an immediate governance alarm. It tends to surface as an operational question:

- Which systems can agents access, and under what conditions?

- How do we ensure sensitive data is handled consistently across pipelines?

- What happens to auditability when decisions are made autonomously, at speed?

That framing is understandable. But experience across enterprise AI deployments makes three things clear:

- The operational question and the governance question are the same question.

- Organizations that recognize this early build with confidence and control.

- Those that treat it as purely operational end up reacting to governance failures, not preventing them.

When Autonomy Exposes What Governance Left Unfinished

Traditional data governance was built for a more manageable environment. Data lived in known systems. Classifications were applied at ingestion. Policies governed who could query what. Humans reviewed outputs before decisions were made.

That model was already under pressure from distributed cloud infrastructure and fragmented SaaS ecosystems. Agentic AI doesn’t add incremental pressure; it changes what governance is required to do.

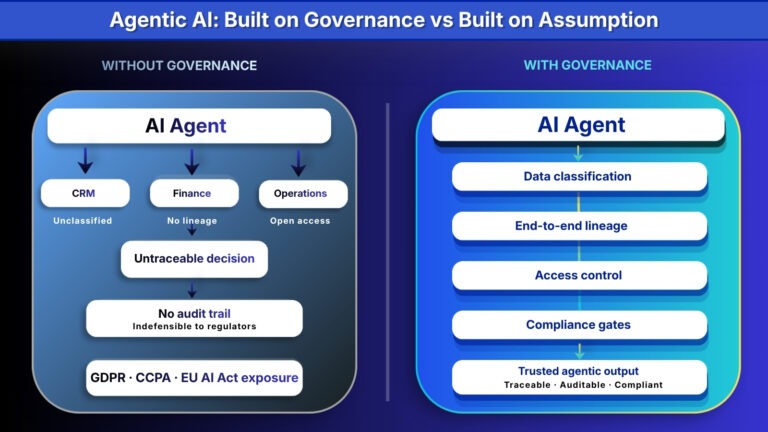

Autonomous agents don’t simply read data. They traverse it, combine it across sources, and act on it within seconds. A single inconsistency in how a business term is defined across sales, finance, and operations doesn’t just create confusion. It shapes the decisions an agent makes, at scale, with no human checkpoint in between.

Gaps that were tolerable when humans reviewed outputs become operationally significant when agents act autonomously. Inconsistent PII classification, incomplete lineage tracking, and undefined access boundaries each create risk that scales directly with the autonomy of the system above them.

Governance as the Enabling Layer, Not a Constraint

The organizations deploying agentic AI with confidence are not the ones with the most sophisticated models. They are the ones that built governance into the data environment before autonomy arrived.

Three properties define what this looks like in production, and they are central to how Arivonix approaches enterprise AI governance deployments:

- Consistent classification from ingestion, so masking, transformation, and access rules apply uniformly across every pipeline. Agents inherit appropriate data boundaries by design, not by manual intervention, which matters most precisely when manual intervention isn’t available.

- End-to-end lineage that survives complexity, so that when an agent retrieves context from a versioned, metadata-enriched knowledge structure, the output is traceable. Organizations that structure internal content, including reports, transcripts, tickets, and wikis, into access-controlled knowledge assets consistently report clearer retrieval and greater team trust.

- Context-aware access control that follows the data, not just the perimeter. Policy enforcement travels with the data into every pipeline, every agent context window, and every downstream action, rather than sitting at the edge waiting for a boundary to be crossed.
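As a rough illustration of the first and third properties, the sketch below tags each field with a sensitivity level once, at ingestion, and then applies masking at the point where data enters an agent's context. Everything here is hypothetical and chosen for illustration: the `Sensitivity` levels, the `PII_FIELDS` rule set, and the `ingest` and `build_agent_context` helpers are assumptions, not part of any Arivonix product or specific library.

```python
from dataclasses import dataclass
from enum import IntEnum


class Sensitivity(IntEnum):
    PUBLIC = 0
    INTERNAL = 1
    PII = 2


@dataclass(frozen=True)
class Field:
    """A value that carries its classification and origin with it."""
    name: str
    value: str
    sensitivity: Sensitivity
    source: str  # minimal lineage: the system the value was ingested from


# Hypothetical rule set: field names treated as PII at ingestion.
PII_FIELDS = {"email", "phone", "ssn"}


def ingest(record: dict[str, str], source: str) -> list[Field]:
    """Classify every field once, at ingestion, so later rules apply uniformly."""
    return [
        Field(
            name=name,
            value=value,
            sensitivity=Sensitivity.PII if name in PII_FIELDS else Sensitivity.INTERNAL,
            source=source,
        )
        for name, value in record.items()
    ]


def build_agent_context(fields: list[Field], clearance: Sensitivity) -> dict[str, str]:
    """Mask anything above the agent's clearance before it reaches the agent."""
    return {
        f.name: f.value if f.sensitivity <= clearance else "***MASKED***"
        for f in fields
    }


fields = ingest({"customer": "Acme Corp", "email": "ops@acme.example"}, source="crm")
context = build_agent_context(fields, clearance=Sensitivity.INTERNAL)
print(context)  # {'customer': 'Acme Corp', 'email': '***MASKED***'}
```

Because the classification is attached to the field rather than to the system it came from, the same masking rule applies in every pipeline that handles it. A production setup would likely derive classifications from a data catalog rather than a hard-coded set of field names.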

The Compliance Dimension Organizations Are Underestimating

Governance programs that treat regulatory compliance as secondary quickly find it becomes a primary liability.

Every individual whose data an agentic system touches has rights that are increasingly codified. Between them, GDPR, CCPA, and the EU AI Act impose specific expectations around transparent automated processing, explanation of automated decisions, and demonstrable risk management. GDPR and CCPA are already enforceable law, and most of the EU AI Act’s obligations are scheduled to apply from August 2026.

What makes agentic AI distinct from prior automation is the speed and opacity of its decision-making. An agent that cannot be traced cannot be governed. An agent that cannot be governed cannot be defended to a regulator, an auditor, or an internal stakeholder who needs to understand why a decision landed where it did.

The governance capabilities that make compliance defensible are specific:

- Immutable logs capturing every data access, agent action, and decision in the chain

- Provenance mechanisms that identify which data informed which output, at any point in time

- Automated compliance gates embedded in deployment pipelines, so policy validation happens before release, not after an audit surfaces a gap

- Full tracing across multi-agent interactions, making forensic review available the moment a question arises
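One common way to approximate the first two capabilities is a hash-chained, append-only log, where each entry records the data sources behind an action and commits to the entry before it. The sketch below is a minimal illustration under stated assumptions, not a description of any particular product: the `AuditLog` class, its field names, and the agent and source names are all hypothetical. Strictly speaking, this makes the log tamper-evident rather than immutable; true immutability would also need write-once storage. Timestamps are omitted to keep the example deterministic.

```python
import hashlib
import json


class AuditLog:
    """Append-only log in which each entry commits to the one before it."""

    GENESIS = "0" * 64  # placeholder hash for the first entry

    def __init__(self) -> None:
        self._entries: list[dict] = []

    @staticmethod
    def _digest(body: dict) -> str:
        # Canonical JSON (sorted keys) so the same body always hashes the same.
        payload = json.dumps(body, sort_keys=True).encode("utf-8")
        return hashlib.sha256(payload).hexdigest()

    def append(self, agent: str, action: str, data_sources: list[str]) -> dict:
        prev_hash = self._entries[-1]["hash"] if self._entries else self.GENESIS
        body = {
            "seq": len(self._entries),
            "agent": agent,
            "action": action,
            "data_sources": data_sources,  # provenance: what informed this action
            "prev_hash": prev_hash,
        }
        entry = {**body, "hash": self._digest(body)}
        self._entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute the chain; any edited or reordered entry breaks it."""
        prev_hash = self.GENESIS
        for entry in self._entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if entry["prev_hash"] != prev_hash or entry["hash"] != self._digest(body):
                return False
            prev_hash = entry["hash"]
        return True

    def sources_for(self, seq: int) -> list[str]:
        """Answer 'which data informed this action?' for a given entry."""
        return list(self._entries[seq]["data_sources"])


log = AuditLog()
log.append("pricing-agent", "read", ["warehouse.orders"])
log.append("pricing-agent", "update_quote", ["warehouse.orders", "crm.accounts"])
print(log.verify())        # True
print(log.sources_for(1))  # ['warehouse.orders', 'crm.accounts']
```

If any stored entry is later altered, `verify()` returns `False`, which is the property an auditor or regulator would rely on during forensic review.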

What Data Leaders Are Actually Optimizing For

Most data and technology leaders navigating this shift aren’t chasing perfect governance in the abstract. They’re trying to build data environments that hold up under real operational and regulatory pressure, and that continue to hold up as agentic AI spreads across functions, teams, and use cases.

That consistently comes down to three questions:

- Can we trace exactly which data informed an agent action, and why?

- Was sensitive information involved, and was it handled according to policy?

- Can we meet compliance expectations without slowing teams down?

The organizations that answer yes to all three are consistently the ones that treated data governance as an architectural property from the start, building lineage, classification, policy enforcement, and versioned knowledge structures before scale exposed the gaps.

For enterprises operating under GDPR, CCPA, and the EU AI Act, with most of the AI Act’s obligations scheduled to apply from August 2026, this embedded approach is no longer just good practice. It is a competitive and regulatory requirement.

The Bigger Picture Taking Shape

A consistent theme has emerged across forward-looking enterprise strategies: data governance is no longer framed as something that slows innovation. It is increasingly recognized as the quiet enabler that makes autonomy scalable, defensible, and durable.

Arivonix’s Data-Centric AI Assurance framework is built around this reality. It doesn’t add governance to agentic deployments after the fact. It embeds classification, lineage, access control, and compliance into how those deployments are constructed, operated, and audited, from data ingestion through to the full decision trail.

Data governance doesn’t demand a complete architectural reset. It asks for something more focused: a clear-eyed assessment of whether the data environment underneath is coherent enough to support systems that act on it without human review at every step.

The teams moving fastest aren’t the ones with the most capable models. They’re the ones who recognized early that production confidence comes from the surrounding data architecture, and built accordingly.

When governance, security, and observability are embedded from the start, agentic AI stops being an experiment and starts behaving like infrastructure: consistent, traceable, and accountable across the organization.

That’s what responsible AI looks like in practice: not a policy document, but a living architecture built on clear principles at every layer.

Whether you’re ready to build in governance from day one or still mapping your current state, we’re here to help.


This blog was first published on Medium.